Property valuation used to be slower, more manual, and heavily dependent on local comps and an appraiser’s on-ground judgment. That approach still matters, but the market has changed. Listings update faster, neighborhoods shift more quickly, and buyers respond to signals that are not always visible in sales records.

That is why automated property valuation models, often called AVMs, have become a significant component of modern valuation workflows. An AVM is commonly defined as a real estate valuation approach that uses statistical modeling and software to estimate a property’s value.

For appraisers, real estate analysts, and data scientists, the real question is not whether AVMs exist but how to make them better, especially in markets where data quality is uneven and local dynamics move quickly. The biggest lever is the same one that has improved models in other industries: big data, meaning richer real estate inputs that expand what the model can “see.”

Traditional valuation vs machine learning models

Traditional valuation methods are built on a small set of trusted pillars: comparable sales, adjustments for property features, and local market context. They work well when comps are recent, markets are stable, and property data is complete.
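To make the comps logic concrete, here is a minimal Python sketch of the sales comparison idea. It adjusts each comparable sale toward the subject property and then averages the adjusted prices. The feature names and per-unit adjustment rates are hypothetical placeholders, not market-derived values. In practice, appraisers support each adjustment with local evidence and often weight comps by similarity instead of averaging them equally.

```python
from statistics import mean

# Hypothetical per-unit adjustment rates (dollars); real rates come from local market evidence.
ADJUSTMENT_RATES = {
    "sqft": 150.0,
    "bedrooms": 10_000.0,
    "bathrooms": 7_500.0,
}

def adjust_comp(subject: dict, comp: dict) -> float:
    """Move a comp's sale price toward the subject: add value where the subject has more of a feature."""
    price = comp["sale_price"]
    for feature, rate in ADJUSTMENT_RATES.items():
        price += (subject[feature] - comp[feature]) * rate
    return price

def comp_estimate(subject: dict, comps: list[dict]) -> float:
    """Equal-weight average of the adjusted comp prices."""
    return mean(adjust_comp(subject, comp) for comp in comps)

subject = {"sqft": 1800, "bedrooms": 3, "bathrooms": 2}
comps = [
    {"sale_price": 400_000, "sqft": 1700, "bedrooms": 3, "bathrooms": 2},
    {"sale_price": 430_000, "sqft": 1900, "bedrooms": 3, "bathrooms": 2.5},
]
print(comp_estimate(subject, comps))  # prints 413125.0
```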

Machine learning models do not replace this logic. They scale it.

Where traditional methods may look at a few dozen comps, AVMs can learn patterns from millions of transactions, price changes, and property feature combinations. The benefit is speed and consistency. The tradeoff is that the model is only as strong as its data coverage, its feature accuracy, and its handling of unusual properties.

A practical way to view it:

- Appraisals are excellent for final decisions, lending, and edge cases.

- AVMs are excellent for monitoring, screening, portfolio valuation, and fast scenario analysis.

In many teams, the best workflow is hybrid: AVM for baseline and flags, appraiser for verification and judgment.
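One way to encode that hybrid workflow is a simple triage rule that routes a property to an appraiser when the AVM range is wide or the property is flagged as unusual. This is a sketch under assumptions: the `AvmResult` fields and the 15% relative-width threshold are hypothetical and would need tuning against your own review outcomes.

```python
from dataclasses import dataclass

@dataclass
class AvmResult:
    property_id: str
    point: float  # point estimate
    low: float    # lower bound of the estimate range
    high: float   # upper bound of the estimate range
    is_unusual: bool = False  # e.g. atypical size or type; the definition is a team decision

def needs_appraiser_review(result: AvmResult, max_relative_width: float = 0.15) -> bool:
    """Route to an appraiser when the property is unusual or the range is wide relative to the estimate."""
    relative_width = (result.high - result.low) / result.point
    return result.is_unusual or relative_width > max_relative_width

queue = [
    AvmResult("A-1", 400_000, 380_000, 420_000),
    AvmResult("A-2", 400_000, 340_000, 460_000),
    AvmResult("A-3", 900_000, 880_000, 920_000, is_unusual=True),
]
print([r.property_id for r in queue if needs_appraiser_review(r)])  # prints ['A-2', 'A-3']
```

The AVM still supplies a baseline for every property; only the flagged ones consume appraiser time.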

How automated property valuation models work

Most AVMs follow the same pipeline, even if the algorithms differ.

Step 1: Collect and standardize data

AVMs typically blend:

- public records and tax assessment inputs

- MLS and listing data (where available)

- user-submitted corrections and updates

- recent market behavior and local trends
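Blending these sources often starts with a field-level merge, where each source fills or overrides fields according to a precedence order. The sketch below assumes a hypothetical order (public records, then MLS, then user corrections) and treats `None` as missing. Whether user-submitted data should outrank public records is a policy choice each team has to make and audit.

```python
# Hypothetical precedence, lowest to highest: later sources override earlier ones
# whenever they supply a non-missing value.
SOURCE_PRIORITY = ["public_record", "mls", "user_correction"]

def merge_property_records(records: dict[str, dict]) -> dict:
    merged: dict = {}
    for source in SOURCE_PRIORITY:
        for field, value in records.get(source, {}).items():
            if value is not None:  # None is treated as "missing", so it never overwrites
                merged[field] = value
    return merged

records = {
    "public_record": {"sqft": 1650, "bedrooms": 3, "year_built": 1978},
    "mls": {"sqft": 1720, "bedrooms": None},
    "user_correction": {"bedrooms": 4},
}
print(merge_property_records(records))  # prints {'sqft': 1720, 'bedrooms': 4, 'year_built': 1978}
```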

Zillow explains that its Zestimate incorporates public, MLS, and user-submitted data, along with home facts, location, and market trends, and that it is not an appraisal. Redfin notes that its estimate uses MLS data, and both companies publish accuracy metrics for on-market vs. off-market homes.

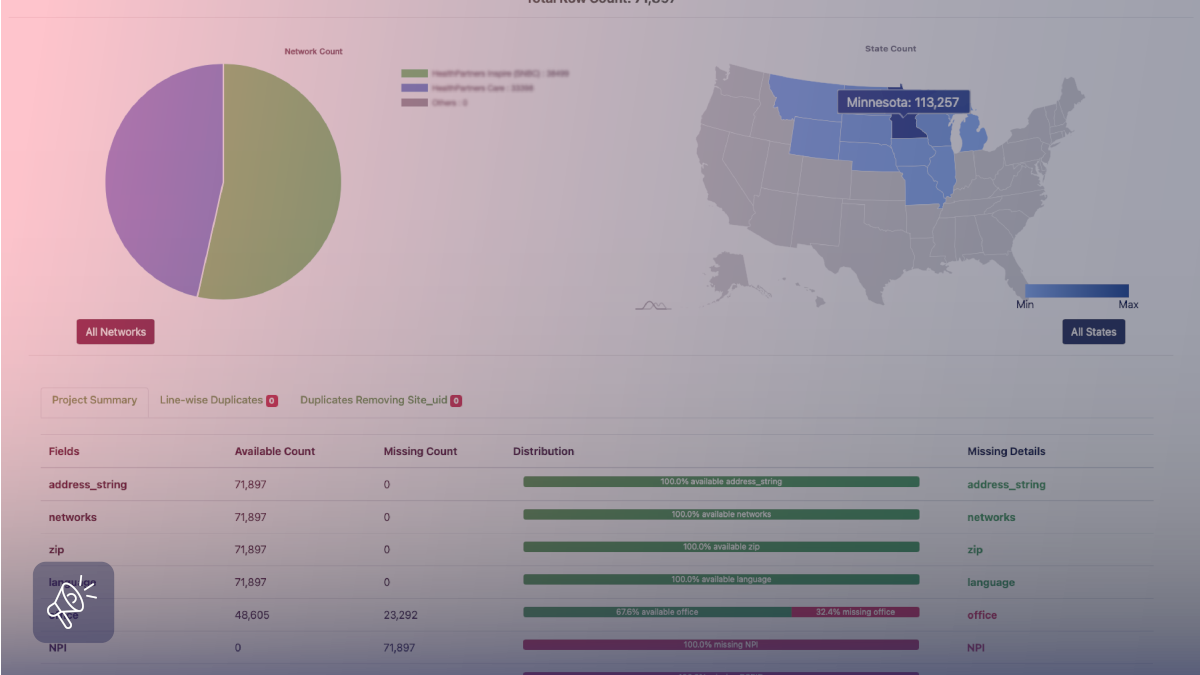

This is where data teams spend most of their real effort: resolving address issues, cleaning duplicates, handling missing values, and standardizing inconsistent feature fields.
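Address resolution and de-duplication can start as simply as normalizing address strings and keeping the latest record per normalized key. This minimal sketch assumes a small, hypothetical suffix map and ISO 8601 `updated` date strings, which sort correctly as plain text. Production pipelines usually rely on a full address parser or geocoder rather than a hand-written map.

```python
import re

# Small hypothetical suffix map; production pipelines usually use a full address standardizer.
SUFFIXES = {"street": "st", "avenue": "ave", "road": "rd", "drive": "dr", "boulevard": "blvd"}

def normalize_address(raw: str) -> str:
    """Lowercase, replace punctuation with spaces, collapse whitespace, and abbreviate suffixes."""
    text = re.sub(r"[^\w\s]", " ", raw.lower())
    return " ".join(SUFFIXES.get(token, token) for token in text.split())

def dedupe_listings(listings: list[dict]) -> list[dict]:
    """Keep the most recently updated listing per normalized address."""
    latest: dict[str, dict] = {}
    for listing in listings:
        key = normalize_address(listing["address"])
        current = latest.get(key)
        # ISO 8601 date strings compare correctly as plain text.
        if current is None or listing["updated"] > current["updated"]:
            latest[key] = listing
    return list(latest.values())

listings = [
    {"address": "12 Oak Street, Apt. 4", "updated": "2024-04-20", "price": 455_000},
    {"address": "12 oak st apt 4", "updated": "2024-05-01", "price": 449_000},
    {"address": "7 Elm Avenue", "updated": "2024-05-02", "price": 610_000},
]
print(dedupe_listings(listings))
```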

Step 2: Build features the model can learn from

A strong AVM is not just “bed/bath plus price history.” It needs a wide feature set that reflects how buyers actually price homes.

Key features scraped for valuation

Web data can fill gaps that public records and basic datasets often miss, especially around property condition and amenity signals. Common valuation features include:

Property fundamentals

Square footage, lot size, bedrooms, bathrooms, year built, property type, parking, floors, and layout hints.

Condition and quality signals

Renovation mentions, “recently updated” indicators, finish-level descriptions, building age vs. remodel age, photos (when used ethically and lawfully), and listing language patterns.
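Some condition signals can be derived from fields most datasets already have. The sketch below computes building age, a simple proxy for effective age measured from the later of construction or last known remodel, and a count of renovation phrases in the listing description. The phrase list and function name are hypothetical; which phrases actually carry price information should be validated on your own market data.

```python
import re
from typing import Optional

# Hypothetical phrase list; validate which phrases actually move prices in your market.
RENOVATION_PATTERN = re.compile(
    r"\b(recently (?:updated|renovated|remodeled)|new (?:kitchen|roof|flooring)|fully renovated)\b",
    re.IGNORECASE,
)

def condition_features(year_built: int, remodel_year: Optional[int], description: str, as_of_year: int) -> dict:
    # The effective-age proxy counts from the later of construction or the last known remodel.
    effective_year = max(year_built, remodel_year) if remodel_year is not None else year_built
    return {
        "building_age": as_of_year - year_built,
        "effective_age": as_of_year - effective_year,
        "renovation_mentions": len(RENOVATION_PATTERN.findall(description)),
    }

print(condition_features(1985, 2019, "Recently updated 3-bed with a new kitchen and fresh paint.", 2024))
# prints {'building_age': 39, 'effective_age': 5, 'renovation_mentions': 2}
```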

Amenities and micro-features

Elevator, pool, gym, clubhouse, power backup, security, view, balcony, furnishing level, pet-friendly rules, HOA, and building services.

Pricing behavior

List price changes, days on market, relisting patterns, sale-to-list ratios in the micro-area, and nearby inventory pressure.
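Pricing-behavior features usually come from a listing's event history. This sketch assumes a hypothetical event schema of `(date, event_type, price)` tuples sorted by date, where the first entry is the original `"list"` event and `"price_change"` and `"sold"` are the other event types. The micro-area sale-to-list figure is then the median of the individual ratios.

```python
from datetime import date
from statistics import median

# Hypothetical event schema: (event_date, event_type, price), sorted by date.
# The first event is assumed to be the original "list" event.
Event = tuple[date, str, float]

def listing_behavior(events: list[Event]) -> dict:
    list_date, _, original_price = events[0]
    features = {"price_changes": sum(1 for _, kind, _ in events if kind == "price_change")}
    sold = [e for e in events if e[1] == "sold"]
    if sold:
        sold_date, _, sold_price = sold[-1]
        # Last asking price in effect on or before the sale date.
        last_ask = [p for d, k, p in events if k in ("list", "price_change") and d <= sold_date][-1]
        features["days_on_market"] = (sold_date - list_date).days
        features["sale_to_list"] = sold_price / last_ask
        features["sale_to_original_list"] = sold_price / original_price
    return features

def micro_area_sale_to_list(listings: list[list[Event]]) -> float:
    """Median sale-to-list ratio across the sold listings in one micro-area."""
    ratios = [f["sale_to_list"] for f in map(listing_behavior, listings) if "sale_to_list" in f]
    return median(ratios)
```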

Location intelligence

School access, transit proximity, points of interest (POIs), commute-time bands, and neighborhood risk flags (flood zones, noise corridors).
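Straight-line distance to the nearest point of interest is a common starting feature for location intelligence. This sketch uses the haversine formula on latitude/longitude pairs in degrees. The band edges are hypothetical, and true commute-time bands would need a routing engine rather than straight-line distance.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0088  # mean Earth radius

def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two (lat, lon) points given in degrees."""
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))

def nearest_poi_km(lat: float, lon: float, pois: list[tuple[float, float]]) -> float:
    """Distance to the closest POI, e.g. transit stops or schools."""
    return min(haversine_km(lat, lon, p_lat, p_lon) for p_lat, p_lon in pois)

def proximity_band(distance_km: float) -> str:
    # Hypothetical band edges; tune per market and per POI type.
    if distance_km <= 0.8:
        return "walkable"
    if distance_km <= 3.0:
        return "short_ride"
    return "far"
```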

The goal is not to collect “more fields.” It is to collect the few fields that most reduce uncertainty about your target market.

How external data improves accuracy

When people talk about AVM accuracy, they often focus on the model type. In practice, accuracy improves most when the model gets better external signals.

Here are four external datasets that routinely help:

1) Local supply and demand pressure

Inventory trend by micro-area, new construction pipeline, and rental vacancy signals. This helps the model avoid overreacting to a few noisy sales.
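A standard way to summarize supply pressure is months of supply: active listings divided by the recent average monthly sales pace. The sketch below assumes you already have these counts per micro-area; the three-month window in the usage line is an illustrative choice, not a rule.

```python
def months_of_supply(active_listings: int, monthly_closed_sales: list[int]) -> float:
    """Active inventory divided by the average monthly sales pace over the given window."""
    if not monthly_closed_sales:
        raise ValueError("need at least one month of sales data")
    pace = sum(monthly_closed_sales) / len(monthly_closed_sales)
    return float("inf") if pace == 0 else active_listings / pace

# Usage: 120 active listings; 40, 50, and 30 closed sales over the last three months.
print(months_of_supply(120, [40, 50, 30]))  # prints 3.0
```

Tracking this number over time, rather than reacting to individual sale prices, is one way to give the model a steadier read on local demand.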

2) Macro indicators that change affordability

Interest-rate shifts, employment shifts, and income-growth proxies do not directly determine the price of a home, but they shift the ceiling on what buyers can pay.
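The affordability ceiling can be made concrete with the standard fixed-rate amortization formula. Holding a buyer's monthly payment budget P fixed, the largest loan it can service is L = P × (1 - (1 + r)^(-n)) / r, where r is the monthly rate and n is the number of monthly payments. This sketch ignores taxes, insurance, down payment, and lender debt-to-income rules, so it illustrates direction rather than a real underwriting limit.

```python
def max_loan(monthly_payment: float, annual_rate: float, years: int) -> float:
    """Largest fully amortizing fixed-rate loan that a given monthly payment can service."""
    n = years * 12
    r = annual_rate / 12
    if r == 0:
        return monthly_payment * n
    return monthly_payment * (1 - (1 + r) ** -n) / r

def monthly_payment(loan: float, annual_rate: float, years: int) -> float:
    """Inverse of max_loan: the payment needed to amortize a loan."""
    n = years * 12
    r = annual_rate / 12
    if r == 0:
        return loan / n
    return loan * r / (1 - (1 + r) ** -n)

# Same $2,500 monthly budget and 30-year term: compare the loan ceiling at 6% and 7%.
for rate in (0.06, 0.07):
    print(f"{rate:.0%}: {max_loan(2500, rate, 30):,.0f}")
```

At these inputs, the 7% ceiling comes out roughly 10% lower than the 6% ceiling, even though nothing about the home itself changed.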

3) Risk and livability layers

Flood exposure, heat risk, safety signals, and school context. These features often explain why two otherwise similar homes are priced differently.

4) Unstructured text signals

Listing descriptions, neighborhood discussions, and reviews. This is where techniques used in sentiment analysis on product reviews start to look surprisingly useful in real estate, because text often reveals the “why” behind buyer willingness to pay.
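The simplest version of a text signal is a lexicon score: count positive and negative terms, flip polarity after a negator, and normalize by length. The word lists below are hypothetical and far too small for production use. Teams typically move on to trained sentiment or embedding models, but this sketch shows the basic shape of the feature.

```python
import re

# Hypothetical, deliberately tiny lexicons for illustration only.
POSITIVE = {"quiet", "bright", "spacious", "updated", "walkable", "renovated"}
NEGATIVE = {"noisy", "dated", "cramped", "flood", "busy", "repairs"}
NEGATORS = {"not", "no", "never"}

def text_signal(text: str) -> float:
    """Net positive-minus-negative term count per token, with simple negation handling."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, token in enumerate(tokens):
        polarity = 1 if token in POSITIVE else -1 if token in NEGATIVE else 0
        if polarity and i > 0 and tokens[i - 1] in NEGATORS:
            polarity = -polarity  # "not quiet" counts as negative
        score += polarity
    return score / max(len(tokens), 1)
```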

Measuring AVM accuracy in the real world

Accuracy is not a single number. It changes by:

- whether the home is on-market or off-market

- data availability in the region

- property uniqueness

- market volatility

Both Zillow and Redfin publish median error rates and separate on-market vs. off-market performance. Zillow states a nationwide median error rate of around 1.83% for on-market homes and 7.01% for off-market homes (as reported on its Zestimate pages). Redfin reports median error rates of around 2.00% for on-market homes and 7.69% for off-market homes.
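Median error figures like these can be computed on your own data by taking the absolute percentage error per property and reporting the median within each segment. The sketch assumes each row holds a segment label, an estimate recorded before the sale was known (so the sale price cannot leak into the evaluation), and the final sale price. The within-10% share is an additional metric you may find useful; the threshold is adjustable.

```python
from collections import defaultdict
from statistics import median

def error_by_segment(rows, within: float = 0.10) -> dict:
    """rows: iterable of (segment, pre_sale_estimate, sale_price)."""
    errors = defaultdict(list)
    for segment, estimate, sale_price in rows:
        errors[segment].append(abs(estimate - sale_price) / sale_price)
    return {
        segment: {
            "median_abs_pct_error": median(errs),
            "share_within": sum(e <= within for e in errs) / len(errs),
            "n": len(errs),
        }
        for segment, errs in errors.items()
    }
```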

Two practical takeaways for analysts:

- Off-market is harder because the model has fewer fresh signals.

- Treat AVM outputs as ranges, not single-point truths, especially for off-market properties.
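A simple way to turn a point estimate into a range is to use the spread of historical errors from the same segment, for example off-market homes in the same region. This sketch takes signed relative errors, (sale - estimate) / estimate, and reads off their empirical quantiles with linear interpolation. The 80% default coverage and the function names are assumptions, and the range is only as trustworthy as the error history behind it.

```python
def _quantile(sorted_vals: list[float], q: float) -> float:
    """Linear-interpolation quantile of an already sorted list, for 0 <= q <= 1."""
    pos = q * (len(sorted_vals) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(sorted_vals) - 1)
    return sorted_vals[lo] + (sorted_vals[hi] - sorted_vals[lo]) * (pos - lo)

def empirical_range(point: float, signed_rel_errors: list[float], coverage: float = 0.8) -> tuple[float, float]:
    """Range around a point estimate built from historical errors in the same segment."""
    if not signed_rel_errors:
        raise ValueError("need historical errors to build a range")
    errs = sorted(signed_rel_errors)
    tail = (1 - coverage) / 2  # equal probability mass left out on each side
    return point * (1 + _quantile(errs, tail)), point * (1 + _quantile(errs, 1 - tail))
```

Because off-market segments usually have wider error distributions, the same function naturally produces wider ranges for them.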

Example: Redfin and Zillow valuation methods

No public AVM fully reveals every modeling detail, but they do describe the data types and broad approach.

Zillow and the Neural Zestimate

Zillow’s tech write-up describes the “Neural Zestimate” as an estimate for off-market homes, incorporating property data such as sales transactions, tax assessments, public records, and home details like square footage and location, at a very large national scale.

Redfin Estimate and MLS access

Redfin emphasizes its use of MLS data and publishes accuracy metrics by on-market and off-market segments, which is a helpful reminder that data freshness matters as much as algorithm choice.

The bigger lesson here is not “copy Zillow” or “copy Redfin.” It is to build a model that matches your market structure and the data you can reliably refresh.

Zestimate alternatives and where they fit

When people say “Zestimate alternatives,” they often mean online consumer tools. In professional workflows, “alternatives” also include lender-grade and enterprise AVMs.

Common categories include:

- Portal estimates (useful for quick benchmarks and consumer context)

- Brokerage estimates (often with tighter MLS integration in certain markets)

- Enterprise AVMs used by lenders, insurers, and investors

For example, CoreLogic markets an automated valuation solution (Total Home Value) positioned for large-scale valuation use cases.

When selecting an AVM source, the decision usually comes down to:

- coverage and refresh frequency

- explainability (can you show why the value moved?)

- bias and data gaps in your target regions

- ability to ingest your custom features (renovation, amenities, local risk layers)

Future trends: AI-driven property appraisal

The next wave of valuation improvements is less about swapping one regression model for another and more about expanding what the model can interpret.

Multimodal valuation

More models are learning from structured data, images, floor plans, and text. That is where “condition” becomes measurable, not just guessed.

More transparent ranges, not single numbers

Users are starting to expect confidence bands and “what would change the estimate” explanations, because that is closer to how real underwriting works.
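For a linear hedonic model, “what would change the estimate” has an exact answer: each feature's coefficient times its change. This sketch uses hypothetical coefficients. For nonlinear models such as gradient-boosted trees, teams commonly turn to attribution methods like SHAP, which this sketch does not implement.

```python
# Hypothetical coefficients from a linear hedonic model, in dollars per unit of each feature.
COEFFICIENTS = {"sqft": 140.0, "bathrooms": 9_000.0, "effective_age": -1_200.0}

def explain_change(before: dict, after: dict) -> dict:
    """Per-feature contribution to the change in a linear model's estimate, plus the total."""
    contributions = {
        feature: coef * (after[feature] - before[feature])
        for feature, coef in COEFFICIENTS.items()
        if after[feature] != before[feature]
    }
    contributions["total"] = sum(contributions.values())
    return contributions

before = {"sqft": 1800, "bathrooms": 2, "effective_age": 20}
after = {"sqft": 1800, "bathrooms": 2.5, "effective_age": 5}  # e.g. add a half bath and renovate
print(explain_change(before, after))
# prints {'bathrooms': 4500.0, 'effective_age': 18000.0, 'total': 22500.0}
```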

Faster adaptation in volatile markets

In changing markets, the best models update quickly without overfitting to short-term noise. This is often a data engineering problem first, and an ML problem second.
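One common way to adapt without chasing noise is to smooth a local price signal, such as monthly median price per square foot, with an exponentially weighted moving average. The smoothing factor `alpha` is a hypothetical default: higher values react faster to real shifts, lower values filter out more short-term noise, and the right trade-off depends on transaction volume in each area.

```python
def ewma(values: list[float], alpha: float = 0.3) -> list[float]:
    """Exponentially weighted moving average; the first value seeds the smoothed level."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    if not values:
        return []
    smoothed = []
    level = values[0]
    for value in values:
        level = alpha * value + (1 - alpha) * level
        smoothed.append(level)
    return smoothed

# A one-month jump from 100 to 130 moves the smoothed level only partway.
print(ewma([100.0, 100.0, 130.0], alpha=0.5))  # prints [100.0, 100.0, 115.0]
```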

Better compliance and governance

As web-extracted features become more common, teams are investing more in provenance, permission-aware collection, and audit trails.
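A lightweight starting point for provenance is to attach a record to every collected payload: where it came from, when it was collected, on what basis, and a content hash that lets an audit confirm the stored data has not changed. The field names and basis labels here are hypothetical, not a standard schema.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

@dataclass(frozen=True)
class ProvenanceRecord:
    source_url: str
    collected_at: str      # ISO 8601 timestamp in UTC
    content_sha256: str    # hash of the canonicalized payload
    collection_basis: str  # e.g. "licensed feed" or "permitted crawl"; labels are hypothetical

def make_provenance(source_url: str, payload: dict, collection_basis: str,
                    now: Optional[datetime] = None) -> ProvenanceRecord:
    now = now or datetime.now(timezone.utc)
    # Sorting keys makes the hash independent of field order in the payload.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return ProvenanceRecord(source_url, now.isoformat(), hashlib.sha256(canonical).hexdigest(), collection_basis)
```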

How Grepsr supports automated property valuation workflows

Most AVM projects struggle in the same place: keeping the data pipeline reliable as listings change every day, sources drift, and fields stop matching your schema. If your inputs are inconsistent, even a strong model starts producing shaky valuations.

Grepsr fixes that foundation by delivering structured, model-ready datasets that capture listings and historical listing changes across multiple portals, so your valuation inputs stay current and comparable over time. Their write-up on tracking property prices across portals explains why multi-source price history is often the difference between a clean valuation signal and a noisy one.

From there, Grepsr can enrich and normalize property features, amenities, and location signals, then run quality checks so the dataset is ready for training and refresh cycles. This is the same “keep it consistent, keep it fresh” approach shown in their Real Estate Data Intelligence customer story, where reliable property datasets are maintained without constant manual rework. If your use case is specifically valuation, the Accurate Property Value Assessment with Data workflow is the closest match to how these datasets plug into automated valuation and monitoring.

Conclusion

Automated property valuation is no longer a niche tool. It is a core layer for appraisers, analysts, and data teams who need speed, scale, and consistency.

The best way to improve AVM accuracy is not to chase a trendy algorithm. It is to improve input quality, expand feature coverage with relevant external signals, and measure performance honestly by segment, especially on-market vs off-market homes.

When you treat AVMs as decision support rather than decision replacement, big data becomes a real advantage, not just a buzzword.

FAQs

What is an automated valuation model (AVM)?

An AVM is a software-driven valuation approach that uses statistical modeling and data inputs to estimate property value.

Why are AVMs less accurate for off-market homes?

Off-market properties typically have fewer fresh signals, such as active listing updates and current buyer feedback, which increases uncertainty. Zillow and Redfin both report higher median error rates for off-market estimates than for on-market estimates.

What data improves valuation accuracy the most?

Accurate property features, recent comps, listing change history, amenity details, and external location and risk layers usually produce the biggest gains.

Are Zestimates and Redfin Estimates appraisals?

No. Both are automated model estimates, not appraisals by a licensed appraiser, and Zillow explicitly states that the Zestimate is not an appraisal.