Commercial real estate decisions are rarely lost because someone picked the wrong building. They are lost because the data was incomplete, outdated, or disconnected from the real question.

A strong commercial real estate data strategy fixes that. It gives brokers, investors, and analysts a repeatable way to collect the right datasets, run consistent CRE analytics, and translate signals like rents, occupancy, pipeline, and tenant mix into decisions you can defend.

This guide breaks down the differences between residential and commercial data needs, where CRE data comes from, how to analyze commercial rent trends and occupancy, how scraped data fits into investment analysis, and what compliance looks like when you operate at scale.

Differences between residential and commercial data needs

Residential data is often transaction-heavy and relatively standardized. Commercial is not. Lease structures vary, tenant quality matters more, and “the market” can change block by block.

In practice, CRE teams need more than sales comps. They need:

- leasing context (asking rent vs effective rent, concessions, term length)

- space-specific context (floor, frontage, loading, ceiling height, parking ratios)

- tenant context (credit, industry exposure, renewal likelihood)

- operational context (capex history, maintenance patterns, utility constraints)

That is why your CRE strategy should start by defining the decision you are trying to improve: leasing, acquisition, portfolio risk, development feasibility, or brokerage prospecting. The data you collect should follow that purpose.

A simple CRE data stack that works

Most effective CRE data stacks end up with four layers.

Layer 1: Asset and building fundamentals

This is your base truth: building specs, ownership signals, location, zoning, unit mix, GLA/NLA, parking, amenities, and any available history.

Layer 2: Market and comp signals

This is where office market data and retail comparables live: asking rents, effective rents where possible, vacancy and availability, absorption, pipeline, and sublease inventory.

Layer 3: Tenant and demand signals

This covers tenant mix, tenant categories, anchor presence, co-tenancy effects, footfall proxies for retail, and local employment drivers for office and industrial.

Layer 4: Alternative signals

News, construction permits, business openings/closures, mobility or POI changes, and localized risk layers. These are often the early-warning systems.

When these layers are connected, your analysis becomes consistent. When they are separate, every analyst builds a new story from scratch.

Sources for commercial real estate data

A healthy data strategy combines proprietary sources with public datasets and fills gaps through controlled web extraction.

Private and marketplace sources

Many CRE teams rely on industry platforms and marketplaces for listings, comps, and market context. CoStar positions itself as a commercial real estate information, analytics, and news platform for CRE professionals. LoopNet is a major commercial real estate marketplace for discovering properties for sale and lease.

Important note for strategy (not legal advice): Some platforms are licensed products with strict usage rules. Treat them as licensed inputs, not “free web pages,” and design your workflows accordingly.

Government and public databases

Public datasets are extremely valuable in CRE because they give you macro drivers and local pipeline signals.

Good starting points:

- Census Bureau APIs, which expose many of the bureau's datasets through its developer portal.

- Building permits for construction and pipeline signals. The Building Permits Survey is residential-focused, but it still helps measure local construction momentum.

- FRED API for interest rates, inflation, employment proxies, and macroeconomic indicators that impact cap rates and leasing demand.

- BLS Public Data API for labor market and economic series that correlate with office demand and retail spending power.

- BEA API for GDP, industry, and regional economic data that can strengthen market narratives and scenario models.

If you are building a repeatable model, public sources are often the best “backbone” because they are stable and documented.
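As one illustration of using a public source as a backbone, here is a minimal sketch that builds a request URL for the FRED `series/observations` endpoint and parses a response-shaped payload. The helper names are hypothetical, the sample numbers are made up, and `DGS10` (the 10-year Treasury yield series) is only an example input; you would supply your own API key and make the HTTP call with whatever client your stack already uses.

```python
from urllib.parse import urlencode

FRED_OBSERVATIONS_URL = "https://api.stlouisfed.org/fred/series/observations"


def build_fred_url(series_id: str, api_key: str, start: str) -> str:
    """Build a request URL for one FRED series, asking for JSON output."""
    params = {
        "series_id": series_id,
        "api_key": api_key,
        "file_type": "json",
        "observation_start": start,
    }
    return f"{FRED_OBSERVATIONS_URL}?{urlencode(params)}"


def parse_observations(payload: dict) -> list[tuple[str, float]]:
    """Return (date, value) pairs, skipping missing values reported as '.'."""
    rows = []
    for obs in payload.get("observations", []):
        if obs["value"] == ".":
            continue
        rows.append((obs["date"], float(obs["value"])))
    return rows


# Illustrative payload shaped like a FRED response; the values are invented.
sample_payload = {
    "observations": [
        {"date": "2024-01-02", "value": "3.95"},
        {"date": "2024-01-03", "value": "."},
        {"date": "2024-01-04", "value": "3.99"},
    ]
}

print(build_fred_url("DGS10", "YOUR_API_KEY", "2024-01-01"))
print(parse_observations(sample_payload))
```

Keeping URL construction and parsing separate from the network call makes the same logic easy to test and reuse across scheduled refreshes.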

Analyzing commercial rent trends and occupancy

Rent trends and occupancy are where strategies become real, because they show whether demand is strengthening or weakening.

Start with the right definitions

Even experienced teams get tripped up by inconsistent terms:

- occupancy vs vacancy vs availability

- asking rent vs effective rent

- leased space vs occupied space

- in-place rent vs market rent

- direct vacancy vs sublease supply

If your portfolio spans multiple cities, lock these definitions early and enforce them across datasets.
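One way to lock definitions is to encode them as small functions that every dataset passes through. The sketch below is an assumption about how a team might define these terms, not an industry standard: availability here adds occupied-but-marketed space to vacant space, and net effective rent uses a simplified straight-line convention that subtracts free rent and a tenant improvement allowance.

```python
def vacancy_rate(vacant_sf: float, total_sf: float) -> float:
    """Share of space that is physically empty."""
    return vacant_sf / total_sf


def availability_rate(vacant_sf: float, marketed_occupied_sf: float, total_sf: float) -> float:
    """Share of space being marketed, whether or not it is empty yet."""
    return (vacant_sf + marketed_occupied_sf) / total_sf


def net_effective_rent(
    face_rent_psf_yr: float,
    term_months: int,
    free_months: int,
    ti_allowance_psf: float = 0.0,
) -> float:
    """Simplified straight-line effective rent per square foot per year.

    Assumption: no discounting and no escalations. Total rent paid over the
    term, less the TI allowance, spread evenly across the full term.
    """
    paid = face_rent_psf_yr * (term_months - free_months) / 12
    return (paid - ti_allowance_psf) / (term_months / 12)


# Hypothetical lease: $40 face rent, 5-year term, 6 months free, $20/SF TI.
print(vacancy_rate(10_000, 100_000))
print(availability_rate(10_000, 5_000, 100_000))
print(net_effective_rent(40.0, 60, 6, 20.0))
```

With these inputs the asking rent is $40 while the effective rent works out to $32, which is why mixing the two in one series distorts trend analysis.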

Track rent like a timeline, not a snapshot

The most useful rent analysis is directional:

- rent growth by micro-market and building class

- time-to-lease and time-on-market

- concessions trend (often an early softness signal)

- renewal vs new lease spreads

Retail real estate trends are often driven by tenant mix, anchor strength, and local footfall. Even without perfect footfall data, a consistent proxy (POI density, business openings, mobility indicators where available) can improve your read.
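To make the timeline idea concrete, here is a minimal sketch of year-over-year rent growth on a quarterly series. The `YYYYQn` period keys and the rent figures are assumptions chosen for illustration; the same pattern applies per micro-market or building class.

```python
def yoy_growth(series: dict[str, float]) -> dict[str, float]:
    """Year-over-year growth for periods keyed like '2024Q1'.

    Periods without a matching quarter one year earlier are skipped.
    """
    growth = {}
    for period, rent in series.items():
        year, quarter = int(period[:4]), period[4:]
        prior = series.get(f"{year - 1}{quarter}")
        if prior:
            growth[period] = rent / prior - 1
    return growth


# Hypothetical asking rents ($/SF/yr) for one micro-market.
asking_rent = {
    "2023Q1": 30.0,
    "2023Q2": 30.5,
    "2024Q1": 31.5,
    "2024Q2": 30.5,
}

for period, g in yoy_growth(asking_rent).items():
    print(period, f"{g:.1%}")
```

Comparing each quarter to the same quarter a year earlier removes seasonality, so a flat or negative reading after a run of growth stands out early.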

Occupancy signals that matter for decisions

Instead of only reporting occupancy, use it to trigger actions:

- rising vacancy + rising concessions = pricing pressure likely

- stable occupancy + rising rents = pricing power and scarcity

- rising sublease inventory = demand risk for office
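The three rules above can be sketched as a small rule function. The field names and the 0.5-point band used to decide what counts as "stable" are assumptions; tune them to your own market definitions.

```python
from dataclasses import dataclass


@dataclass
class PeriodChange:
    """Period-over-period changes for one submarket (hypothetical fields)."""
    vacancy_pts: float      # change in vacancy rate, percentage points
    concessions_pct: float  # change in average concessions, percent
    rent_pct: float         # change in asking rent, percent
    sublease_pct: float     # change in sublease inventory, percent


# Assumption: vacancy moves within +/-0.5 points count as stable occupancy.
STABLE_BAND_PTS = 0.5


def occupancy_signals(change: PeriodChange) -> list[str]:
    """Translate raw changes into the action-oriented signals listed above."""
    signals = []
    if change.vacancy_pts > STABLE_BAND_PTS and change.concessions_pct > 0:
        signals.append("pricing pressure likely")
    if abs(change.vacancy_pts) <= STABLE_BAND_PTS and change.rent_pct > 0:
        signals.append("pricing power and scarcity")
    if change.sublease_pct > 0:
        signals.append("office demand risk")
    return signals


print(occupancy_signals(PeriodChange(1.2, 5.0, -1.0, 8.0)))
print(occupancy_signals(PeriodChange(0.1, 0.0, 2.0, 0.0)))
```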

Using scraped data for investment analysis

Scraped data is most valuable when it fills what licensed products and public data cannot.

Common CRE use cases include:

- tracking active listings and price changes to spot motivated sellers

- monitoring new tenant announcements and store openings

- mapping competitor leasing behavior in a corridor

- building a tenant mix dataset across shopping centers

- tracking construction updates, delays, and local approvals via public notices

The key is discipline: scrape only what you need, normalize it, and keep refresh cycles predictable.

If you want a helpful analogy, treat market monitoring like automated stock monitoring online. You are not checking prices once a quarter. You are building an alert system that tells you when something important changed, and why.

Compliance and ethical considerations in CRE data

A CRE data strategy fails fast when compliance is an afterthought, especially when teams start combining sources.

Focus on four rules of thumb:

Respect licensing and terms

Some datasets are licensed products. Build your workflows so usage aligns with contracts and permissions.

Avoid personal data unless you have a lawful basis

If your pipeline touches personal data (names, direct contact info, identifiable profiles), you need a lawful basis and documentation. GDPR Article 6 lays out lawful bases for processing personal data. If you operate under UK GDPR, the ICO provides guidance on using “legitimate interests” as a lawful basis.

Keep provenance

Store where each field came from, when it was collected, and what transformations were applied. This protects analysts and improves trust.
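Field-level provenance can be sketched as a small wrapper that carries the source, the collection time, and the transformation history alongside each value. The class and field names below are hypothetical, as is the example source URL.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable


@dataclass(frozen=True)
class TrackedValue:
    """A value plus where it came from and what was done to it."""
    value: Any
    source: str
    collected_at: datetime
    transformations: tuple[str, ...] = field(default_factory=tuple)

    def apply(self, name: str, fn: Callable[[Any], Any]) -> "TrackedValue":
        """Return a new TrackedValue with the step recorded in its history."""
        return TrackedValue(
            value=fn(self.value),
            source=self.source,
            collected_at=self.collected_at,
            transformations=self.transformations + (name,),
        )


# Hypothetical raw field as scraped from a listing page.
raw_rent = TrackedValue(
    value="$42.50/SF/yr",
    source="https://example.com/listings/123",
    collected_at=datetime(2024, 6, 1, tzinfo=timezone.utc),
)

rent = raw_rent.apply("parse_usd_psf", lambda s: float(s.strip("$").split("/")[0]))
print(rent.value, rent.source, rent.transformations)
```

Because each step returns a new object, the raw value is never overwritten and an analyst can trace any number back to its origin.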

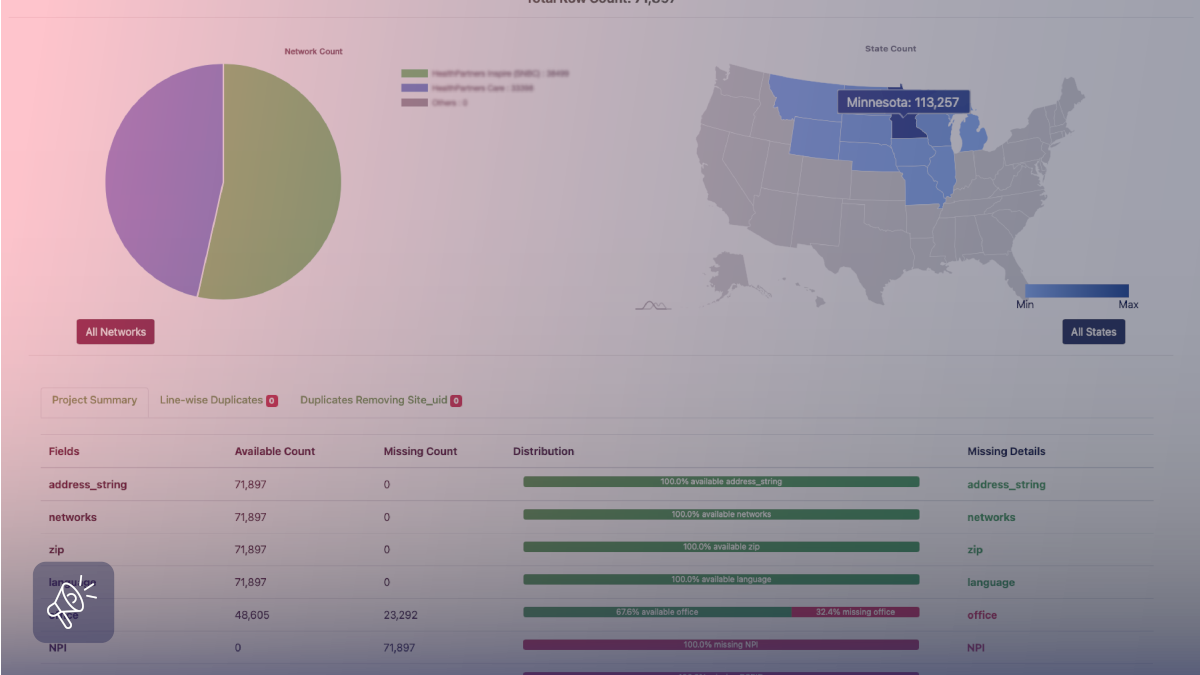

Build quality control as a feature

Bad CRE data is worse than no data at all. Deduping, entity resolution, geocoding checks, and anomaly detection should be part of the pipeline, not a cleanup step.
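As a rough sketch of two of these checks, the code below dedupes records on a normalized address key and flags rent outliers using the median absolute deviation. The record shape, the normalization rules, and the `k = 5` cutoff are all assumptions; real entity resolution usually needs richer matching than this.

```python
import re
from statistics import median


def normalize_address(raw: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9 ]", "", raw.lower())
    return re.sub(r"\s+", " ", cleaned).strip()


def dedupe(records: list[dict]) -> list[dict]:
    """Keep the first record seen for each (address, suite) key."""
    seen, unique = set(), []
    for rec in records:
        key = (normalize_address(rec["address"]), rec.get("suite"))
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique


def flag_outliers(values: list[float], k: float = 5.0) -> list[float]:
    """Flag values more than k median absolute deviations from the median."""
    mid = median(values)
    mad = median([abs(v - mid) for v in values])
    if mad == 0:
        return []
    return [v for v in values if abs(v - mid) > k * mad]


# Hypothetical scraped records; the second is a duplicate of the first.
records = [
    {"address": "100 Main St.", "suite": "200", "rent": 30.0},
    {"address": "100  MAIN ST", "suite": "200", "rent": 30.0},
    {"address": "55 Oak Ave", "suite": None, "rent": 31.0},
]

print(len(dedupe(records)))
print(flag_outliers([30.0, 31.0, 29.5, 32.0, 300.0]))
```

A rent of 300 among values near 30 is far more likely a unit error (monthly vs annual, or total vs per square foot) than a real comp, which is the kind of issue worth catching before it reaches a dashboard.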

A practical step-by-step CRE data strategy

Step 1: Define the decision and the KPI

Examples: increase leasing velocity, find undervalued assets, reduce vacancy risk, or identify emerging corridors. Pair each decision with a metric you can track, such as time-to-lease or vacancy rate.

Step 2: Design the data schema once

Standardize fields for building, suite, lease, tenant, listing, location, and time-series metrics.
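A minimal sketch of what a shared schema could look like, using plain dataclasses. Every entity and field name here is a hypothetical starting point rather than a standard; the aim is that each source maps into the same shapes before any analysis runs.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Building:
    building_id: str
    address: str
    property_type: str  # e.g. "office", "retail", "industrial"
    gla_sf: float


@dataclass(frozen=True)
class Suite:
    suite_id: str
    building_id: str  # links back to Building
    floor: int
    area_sf: float


@dataclass(frozen=True)
class Listing:
    listing_id: str
    suite_id: str  # links back to Suite
    asking_rent_psf_yr: float
    listed_on: date
    source: str
    removed_on: Optional[date] = None


@dataclass(frozen=True)
class MetricObservation:
    entity_id: str  # building, suite, or market identifier
    metric: str     # e.g. "vacancy_rate"
    period: str     # e.g. "2024Q1"
    value: float


def days_on_market(listing: Listing, as_of: date) -> int:
    """Days from listing date to removal, or to as_of if still active."""
    end = listing.removed_on or as_of
    return (end - listing.listed_on).days


listing = Listing("L-1", "S-200", 42.5, date(2024, 1, 1), "example-marketplace")
print(days_on_market(listing, as_of=date(2024, 1, 31)))
```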

Step 3: Choose your source mix

Licensed platforms for depth, public datasets for stability, and web extraction for coverage gaps.

Step 4: Build a refresh cadence

Daily for listings, weekly for changes and comps, monthly for market summaries, quarterly for macro narratives.
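The cadence above can be expressed as configuration plus a small check for what is due. The dataset names are hypothetical, and approximating monthly as 30 days and quarterly as 90 days is a simplifying assumption.

```python
from datetime import datetime, timedelta

# Assumption: monthly and quarterly are approximated as 30 and 90 days.
REFRESH_CADENCE = {
    "listings": timedelta(days=1),
    "comps": timedelta(weeks=1),
    "market_summaries": timedelta(days=30),
    "macro": timedelta(days=90),
}


def due_datasets(last_refreshed: dict[str, datetime], now: datetime) -> list[str]:
    """Return datasets never refreshed or whose interval has elapsed."""
    return [
        name
        for name, interval in REFRESH_CADENCE.items()
        if name not in last_refreshed or now - last_refreshed[name] >= interval
    ]


now = datetime(2024, 6, 30)
last_refreshed = {
    "listings": datetime(2024, 6, 29),
    "comps": datetime(2024, 6, 28),
    "market_summaries": datetime(2024, 5, 1),
}

print(due_datasets(last_refreshed, now))
```

Keeping the cadence in one mapping means a scheduler, a dashboard, and a data-freshness alert can all read the same definition.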

Step 5: Operationalize insights

Dashboards, alerts, scorecards, and a clean handoff to investment committees, brokers, or asset managers.

How Grepsr supports CRE data strategy

A CRE strategy is only as good as its ability to stay fresh. Listings change, formats drift, and multi-source joins can break quietly, which is usually when teams end up spending more time fixing inputs than running deal-moving analysis.

Grepsr supports CRE teams with managed, structured web data through its Data-as-a-Service delivery model, with built-in validation and consistency controls to keep your datasets in the same shape even when sources change. In the Real Estate Data Intelligence customer story, the focus is exactly on this problem: avoiding gaps across residential and commercial property data by replacing brittle in-house crawling with a reliable pipeline. And if your CRE strategy depends on staying ahead of future supply, this piece on data-driven project tracking is a solid example of how structured development data supports faster, clearer decisions. When you want to map sources, refresh frequency, and output formats to your stack, the simplest next step is the Contact Sales flow.

FAQs

What should a commercial real estate data strategy include?

A source plan (licensed + public + web extraction), a standardized schema, refresh cadence, QA rules, and outputs like dashboards and alerts aligned to business decisions.

What are reliable sources for CRE data?

Industry platforms and marketplaces are common inputs, and public sources such as Census APIs, BLS, BEA, and FRED are strong for macro- and regional-level drivers.

How do I analyze office market data effectively?

Use consistent definitions for occupancy and vacancy, track rent as a time series (not a snapshot), and monitor leading indicators such as concessions and sublease inventory.

Can scraped data be used for CRE investment analysis?

Yes, especially for listing changes, tenant movement, corridor monitoring, and local change detection, as long as your collection is permission-aware and compliance-first.