Web data extraction of large datasets is almost impossible with in-house capabilities. Learn why you need an external data provider.

If you’ve ever copied some data points from a certain website to your spreadsheet for analysis later, you’ve arguably performed web scraping. But that’s a really rudimentary way of doing it.

When things get tedious, you could always build a crawler and let it do the grunt work for you. So, what really is the point of an external data provider? Should we lay this article to rest and move on to better things?

Of course not. Building a crawler is the easiest part of web data extraction. You begin to come across insurmountable hurdles when project requirements increase, and the sheer magnitude of data poses a serious challenge.

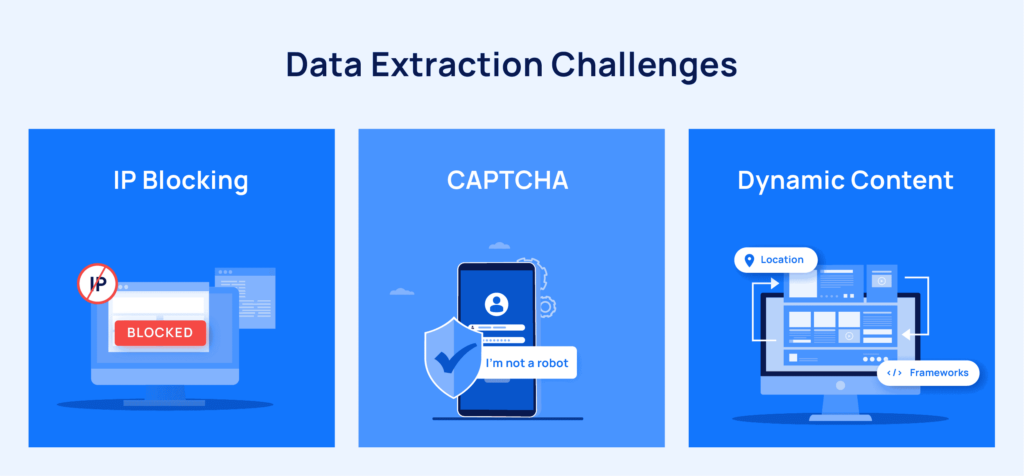

For starters, you have to deal with unavoidable aspects of the job like captcha solving, handling dynamic content, and automating proxy rotation.
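To give a sense of what even one of these chores involves, here is a minimal sketch of proxy rotation in Python. It is an illustration under assumptions, not how any particular provider does it: `fetch_with_rotation`, `AllProxiesFailed`, and the caller-supplied `fetch(url, proxy)` function are hypothetical names, and real setups also have to handle proxy health, rate limits, and captchas.

```python
from itertools import cycle
from typing import Callable, Iterable


class AllProxiesFailed(Exception):
    """Raised when every attempt through the proxy pool fails."""


def fetch_with_rotation(
    url: str,
    proxies: Iterable[str],
    fetch: Callable[[str, str], str],
    max_attempts: int = 5,
) -> str:
    """Try `url` through successive proxies until one attempt succeeds.

    `fetch(url, proxy)` is a hypothetical caller-supplied function that
    returns the page body, or raises an exception when the request is
    blocked or times out.
    """
    proxy_list = list(proxies)
    if not proxy_list:
        raise ValueError("at least one proxy is required")

    pool = cycle(proxy_list)  # round-robin over the pool, wrapping around
    last_error = None
    for _ in range(max_attempts):
        proxy = next(pool)
        try:
            return fetch(url, proxy)
        except Exception as error:  # blocked, timed out, captcha page, etc.
            last_error = error
    raise AllProxiesFailed(
        f"{max_attempts} attempts failed for {url}"
    ) from last_error
```

The sketch only moves on to the next proxy after a failure. Deciding when a proxy is burned, how long to back off, and how to keep the pool fresh is where most of the ongoing effort goes.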

If you notice any of the following telltale signs, it is time to outsource your web data needs.

1. When data extraction is not your main offering

We’ve seen this happen time and again. Businesses accord very little importance to web scraping and assign few resources to the task. As the project gathers steam, data extraction snowballs into a big operation, definitely not something the founders signed up for.

They come to us worn out, almost yearning for respite.

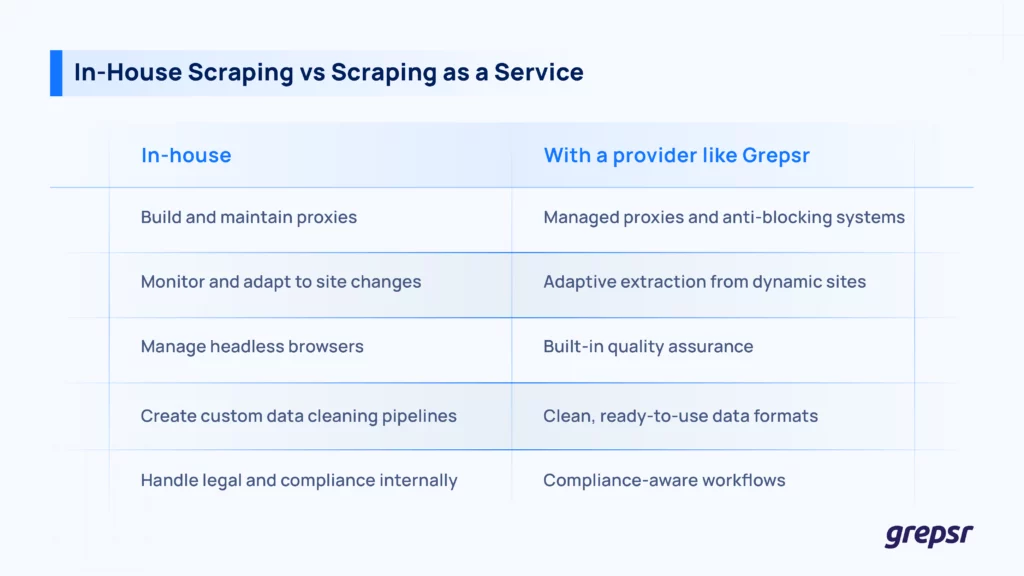

External data providers like Grepsr extract data from thousands of web pages in parallel and organize it in a neat machine-readable format. It is not much of a stretch to conclude that unless web data extraction is the core offering of your business, your engineering team and resources are better utilized elsewhere.

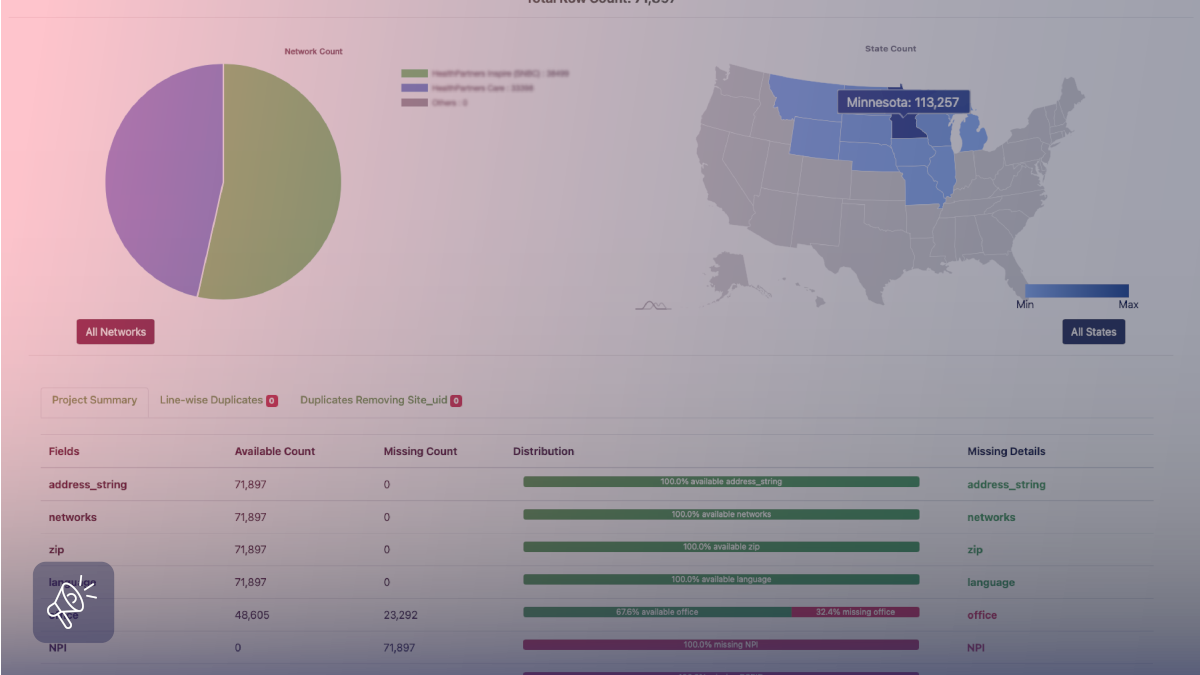

2. When you need data at scale

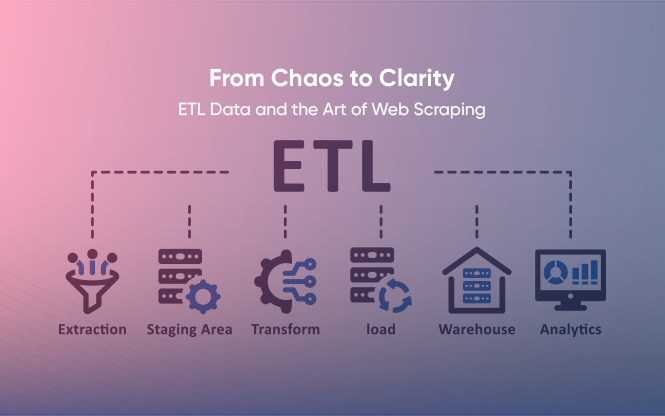

As we mentioned before, setting up the crawler is the easiest part of the data extraction process. As the scope of the project increases, things start getting a whole lot messier.

Changes to website structures, anti-bot mechanisms, and the very valid need for quality data despite both make the job of any data acquisition team difficult.

Websites frequently change their structures, and many load their content dynamically. For instance, AJAX allows a site to update content without reloading the page. Lazy-loaded images and infinite scrolling make it easy for consumers to view more data but complicate the scraper’s job.
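As a rough illustration of why infinite scrolling needs extra work, the Python sketch below assumes the page loads its results in numbered batches as the user scrolls, which is a common pattern but not a universal one. `iter_all_items` and the caller-supplied `fetch_page(page_number)` function are hypothetical names; how a batch is actually requested depends entirely on the site.

```python
from typing import Callable, Iterator


def iter_all_items(
    fetch_page: Callable[[int], list[dict]],
    max_pages: int = 1000,
) -> Iterator[dict]:
    """Yield items batch by batch until the source returns an empty batch.

    `fetch_page(page_number)` is a hypothetical caller-supplied function
    that requests one batch of results, for example from the endpoint a
    page calls while the user scrolls.
    """
    for page_number in range(1, max_pages + 1):
        items = fetch_page(page_number)
        if not items:  # an empty batch means there is nothing left to load
            return
        yield from items
```

The `max_pages` cap is a safety net so that a source which never returns an empty batch cannot keep the crawler running forever.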

Furthermore, the hostility of many source websites toward bots adds to the scraping team’s worries, because all of this puts the quality of the data in jeopardy.

Managed data acquisition services like Grepsr deal with issues like these daily. So if you’re looking to scale your data extraction process, deciding on an external data provider is vital.

3. When you have inadequate technical resources

Large volumes of data bring large volumes of problems. A data extraction team needs a plethora of resources, including high-end servers, proxy services, engineers, and software tools, to ensure a high-quality data feed is entering your system. And none of that comes cheap.

What’s more, onboarding additional personnel, training them, and equipping them with resources not only burns a hole in your pocket but also pulls your attention away from your core business offering.

The question is, are you ready to bear that cost?

4. When you need quality data within a deadline

The fickle nature of websites, along with their determination to block bots, puts web scrapers in a bad position, especially when you don’t have the resources and skill sets to work around these recurring issues.

When you need high-quality data on a regular basis, you cannot rely on DIY techniques to deliver. Ensuring high-quality data demands both automated and manual QA processes.
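To make "automated QA" a little more concrete, here is a minimal Python sketch of the kind of check that might run on every batch of scraped records. It is an assumption-laden illustration rather than a description of any provider's pipeline: `check_records` and the field names in the usage example are hypothetical, and the sketch assumes field values are simple scalars such as strings or numbers.

```python
from typing import Iterable


def is_blank(value: object) -> bool:
    """Treat None and empty or whitespace-only strings as missing."""
    return value is None or str(value).strip() == ""


def check_records(records: Iterable[dict], required_fields: list[str]) -> dict:
    """Return simple quality counts for a batch of scraped records."""
    report = {"total": 0, "missing_fields": 0, "duplicates": 0}
    seen = set()
    for record in records:
        report["total"] += 1
        # Count the record once if any required field is absent or blank.
        if any(is_blank(record.get(field)) for field in required_fields):
            report["missing_fields"] += 1
        # Two records are duplicates when all required fields match.
        key = tuple(record.get(field) for field in required_fields)
        if key in seen:
            report["duplicates"] += 1
        seen.add(key)
    return report
```

For example, `check_records(batch, ["name", "price"])` returns the counts for one batch. A sudden jump in `missing_fields` is often the first hint that a source website has changed its layout, which is where the manual side of QA takes over.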

If you’ve ever worked in a data extraction company, then you know how frequently the crawlers break down. Often, engineers work round the clock to fix these crawlers and get them back on track.

If your entire business operation is centered around quality data extracted at regular intervals, you should seriously consider a reliable data provider who can set up and maintain the crawlers even on complex websites. It is hard to think of another way to leverage high-quality data at scale.

5. When your web data needs are seasonal

Not all companies need data all the time. Say your organization has limited data needs. You use data only for the development of a new product, measurement of market trends during a certain time of the year, and analysis of the competition in a specific segment for a specific project.

In these situations, the best course of action is to outsource your data extraction projects if and when the requirements crop up.

Data extraction is more than meets the eye

Considering the time and money it takes to overcome data extraction hurdles, we recommend that you partner with an external data provider to take care of your web data needs.

Newcomers to data are often bewildered by the sheer scale at which we extract it. We once had a retail analytics company come to us with a very specific need: the product prices of a few of their competitors on Amazon. It was a delight to show them that we could extract such data not just from Amazon, but from eBay, Walmart, and virtually every e-commerce website out there. At any frequency. At any scale.

Grepsr has accumulated extensive experience collecting web data at scale for enterprises that need it. Over the years we have learned and perfected advanced techniques to extract data even from the most troublesome websites.

If it’s time for you to scale, you now know whom to call.