Web scraping to Excel is the automated process of extracting data from websites and exporting it directly into Excel spreadsheets for analysis, visualization, and reporting. This technique combines web scraping tools with Excel’s data manipulation capabilities to transform unstructured web data into organized, actionable insights.

Web scraping and Excel go hand in hand: once you have extracted data from the web, you can organize it in Excel to surface actionable insights.

The internet is by far the largest source of information and data.

Jumping between multiple sites to analyze data can be tedious. If you are analyzing large amounts of data, it makes sense to organize the dataset in a scannable spreadsheet.

Let us show you how web scraping can automate the extraction of the data you need and organize it in Excel, so the insights you’re looking for are easy to read instead of buried among countless other datasets on the web.

A brief overview of web scraping

Web scraping is a go-to method for automatically retrieving information from the internet.

In an era where every organization is looking to make data-driven decisions, web data has emerged as a must-have asset for individuals and enterprises alike.

Web scraping is a valuable tool when you need data at scale. It also gives you access to data that may be hidden behind multiple links and pages.

Web scraping automatically navigates through the web pages, extracts relevant data and captures it for storage and application.

You can also extract data in the form it takes on the source website, such as text, photos, and links, and save it in your desired format.

Leveraging the power of web scraping allows you to access the insights and information to make informed decisions and get a macro view of the market dynamics.

How Web Scraping Works: Technical Overview

To get more details on web scraping, check this article on web scraping with Python. Here is a quick preview: web scraping typically starts by sending HTTP requests to a website, then parses the HTML content and extracts the selected data. Some key components of web scraping are:

HTTP Requests

The scraper sends HTTP requests to target websites, mimicking how a browser loads web pages. These requests retrieve the HTML content containing your desired data.

Example: A price monitoring scraper sends requests to competitor product pages every 6 hours to track pricing changes.
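The request step can be sketched with Python's standard library alone. This is a minimal illustration, not a production scraper: the header values, the example URL, and the function names build_request and fetch_html are hypothetical, and many scrapers use the third-party requests library instead.

```python
import urllib.request

# Hypothetical browser-like headers; many sites serve different HTML
# (or reject the request) depending on what the client sends.
BROWSER_HEADERS = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) ExampleScraper/1.0",
    "Accept": "text/html,application/xhtml+xml",
    "Accept-Language": "en-US,en;q=0.9",
}


def build_request(url: str) -> urllib.request.Request:
    """Build a GET request that mimics the headers a browser would send."""
    return urllib.request.Request(url, headers=BROWSER_HEADERS, method="GET")


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Send the request and return the response body as text."""
    with urllib.request.urlopen(build_request(url), timeout=timeout) as response:
        # Fall back to UTF-8 if the server does not declare a charset.
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

Calling fetch_html("https://example.com/product/123") returns the page's HTML as a string. A price monitor like the one described above would run this on a schedule, for example every 6 hours via cron or another task scheduler.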

HTML Parsing

The scraper analyzes the HTML structure to identify where specific data elements are located within the code. Parsing uses techniques like:

- CSS Selectors: Target elements by class names, IDs, or attributes (e.g., .product-price)

- XPath: Navigate through HTML hierarchy using path expressions

- Regular Expressions: Extract patterns like email addresses or phone numbers
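The three parsing techniques above can be compared on the same snippet. This sketch assumes a small, well-formed product listing (the HTML, class names, and email addresses are made up for illustration). It uses Beautiful Soup for the CSS selector, Python's built-in ElementTree for the XPath-style query (ElementTree supports only a limited subset of XPath; lxml is the usual choice for full XPath on messy real-world HTML), and the re module for the regular expression.

```python
import re
import xml.etree.ElementTree as ET

from bs4 import BeautifulSoup

# Hypothetical product listing used for illustration.
SAMPLE_HTML = """
<div id="catalog">
  <div class="product">
    <h2 class="product-name">Desk Lamp</h2>
    <span class="product-price">$24.99</span>
    <p class="contact">Questions? Email sales@example.com</p>
  </div>
  <div class="product">
    <h2 class="product-name">Office Chair</h2>
    <span class="product-price">$149.00</span>
    <p class="contact">Email support@example.com</p>
  </div>
</div>
"""


def prices_by_css(html: str) -> list[str]:
    """CSS selector: every element with the class 'product-price'."""
    soup = BeautifulSoup(html, "html.parser")
    return [tag.get_text(strip=True) for tag in soup.select(".product-price")]


def names_by_xpath(html: str) -> list[str]:
    """XPath-style path: h2 elements with class 'product-name' inside product divs.

    ElementTree needs well-formed markup and understands only basic XPath.
    """
    root = ET.fromstring(html.strip())
    path = ".//div[@class='product']/h2[@class='product-name']"
    return [el.text for el in root.findall(path)]


# Simplified email pattern; it does not cover every valid address.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def emails_by_regex(html: str) -> list[str]:
    """Regular expression: pull email-like strings out of the raw markup."""
    return EMAIL_RE.findall(html)
```

CSS selectors are usually the most readable option, XPath helps when you need to navigate by position or ancestry, and regular expressions suit free-text patterns that are not tied to the HTML structure.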

Data Extraction

Once the HTML has been parsed, the scraper extracts the desired data from it. Depending on the complexity of the website and your extraction workflow, this can be done with XPath, CSS selectors, or regular expressions.

Data Storage and Export

Extracted data is structured and saved in formats like:

- Databases: Direct storage in MySQL, PostgreSQL, or cloud databases

- CSV: Comma-separated values compatible with all spreadsheet software

- XLSX: Native Excel format preserving formatting and formulas

- JSON: Structured data format for API integrations

- Other formats: Any other format you request
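To illustrate how the same scraped records map onto these formats, here is a sketch using only Python's standard library. The records are hypothetical, and SQLite stands in for MySQL or PostgreSQL, which would need their own driver packages.

```python
import csv
import io
import json
import sqlite3

# Hypothetical scraped records.
RECORDS = [
    {"name": "Desk Lamp", "price": 24.99, "url": "https://example.com/p/1"},
    {"name": "Office Chair", "price": 149.00, "url": "https://example.com/p/2"},
]
FIELDS = ["name", "price", "url"]


def to_csv(records: list[dict]) -> str:
    """Serialize records as CSV text, which Excel opens directly."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def to_json(records: list[dict]) -> str:
    """Serialize records as a JSON array for API integrations."""
    return json.dumps(records, indent=2)


def to_database(records: list[dict], conn: sqlite3.Connection) -> int:
    """Insert records into a table (SQLite as a stand-in) and return the row count."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS products (name TEXT, price REAL, url TEXT)"
    )
    # Named placeholders are filled from each record's dictionary keys.
    conn.executemany(
        "INSERT INTO products (name, price, url) VALUES (:name, :price, :url)",
        records,
    )
    conn.commit()
    return conn.execute("SELECT COUNT(*) FROM products").fetchone()[0]
```

For XLSX, the pandas df.to_excel call used in Method 1 below writes the same records to a native Excel file.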

Exporting Scraped Data to Excel

Once you have successfully extracted the desired data, exporting it to Excel can provide a convenient and familiar format for further analysis. Excel offers powerful data manipulation and visualization capabilities, making it an ideal tool for working with scraped data.

To export scraped data to Excel, you can utilize various methods depending on your chosen web scraping mechanism.

Method 1: Python with Beautiful Soup and Pandas

Python offers the most flexible approach for custom web scraping projects.

Required libraries:

- Requests: Send HTTP requests

- Beautiful Soup: Parse HTML content

- Pandas: Convert data to DataFrames and export to Excel

Basic workflow:

- Import the required libraries (requests, BeautifulSoup from the bs4 package, and pandas)

- Send HTTP request to target URL

- Parse HTML with Beautiful Soup

- Extract data using CSS selectors (Beautiful Soup's select() method); XPath requires a parser such as lxml

- Store data in a Pandas DataFrame

- Export the DataFrame to Excel using df.to_excel('output.xlsx') (writing .xlsx files requires the openpyxl package)
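Put together, the basic workflow might look like the following sketch. It assumes the target page renders its products in static HTML using the hypothetical classes product, product-name, and product-price; pages that build their content with JavaScript would need a different approach, such as a headless browser.

```python
import pandas as pd
import requests
from bs4 import BeautifulSoup

# Hypothetical CSS selectors; adjust them to the target site's markup.
ITEM_SELECTOR = ".product"
NAME_SELECTOR = ".product-name"
PRICE_SELECTOR = ".product-price"


def fetch_html(url: str) -> str:
    """Send an HTTP GET request and return the page HTML."""
    response = requests.get(url, headers={"User-Agent": "Mozilla/5.0"}, timeout=10)
    response.raise_for_status()  # stop early on 4xx/5xx responses
    return response.text


def parse_products(html: str) -> pd.DataFrame:
    """Parse the HTML and collect one row per product."""
    soup = BeautifulSoup(html, "html.parser")
    rows = []
    for item in soup.select(ITEM_SELECTOR):
        name = item.select_one(NAME_SELECTOR)
        price = item.select_one(PRICE_SELECTOR)
        rows.append(
            {
                # Missing elements become None instead of raising an error.
                "name": name.get_text(strip=True) if name else None,
                "price": price.get_text(strip=True) if price else None,
            }
        )
    return pd.DataFrame(rows, columns=["name", "price"])


def scrape_to_excel(url: str, path: str = "output.xlsx") -> pd.DataFrame:
    """Run the full workflow: request, parse, extract, and export."""
    df = parse_products(fetch_html(url))
    # Writing .xlsx files requires an Excel engine such as openpyxl.
    df.to_excel(path, index=False)
    return df
```

A call such as scrape_to_excel("https://example.com/products"), with a placeholder URL, writes output.xlsx and returns the DataFrame for further inspection. Keeping fetching, parsing, and exporting in separate functions makes it easier to test the parser against saved HTML and to update the selectors when the site's layout changes.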

Best for: Developers and data analysts comfortable with coding who need customized scraping logic.

Limitations: Requires programming knowledge, manual handling of anti-scraping measures, and ongoing maintenance for website changes.

For a detailed Python scraping tutorial, see our guide on web scraping with Python.

Method 2: Browser Extensions

Browser-based scraping extensions provide visual, point-and-click data extraction without coding.

How it works:

- Install the browser extension

- Navigate to the target website

- Click on data elements you want to extract

- The extension identifies similar elements across pages

- Export directly to Excel with one click

Example: Pline lets you select product names and prices visually, then automatically extracts matching data from all product listings.

Best for: Business users and non-technical teams who need quick data extraction from simple websites.

Limitations: Limited scalability for complex websites, manual setup for each scraping project, typically handles smaller data volumes.

Method 3: Managed Web Scraping Services

Fully managed services handle the entire extraction process—from configuration to delivery.

Service workflow:

- Submit data requirements (target websites, specific fields, update frequency)

- The service configures custom scrapers

- Data is automatically extracted on your schedule

- Receive cleaned data directly in Excel or via API

- Service maintains scrapers when websites change

Measurable benefit: Companies using managed services report 85% reduction in data acquisition costs compared to hiring dedicated scraping teams.

Best for: Enterprises requiring large-scale, ongoing data extraction without dedicating internal resources.

Key advantage: Professional services handle anti-scraping challenges, CAPTCHA solving, IP rotation, and legal compliance automatically.

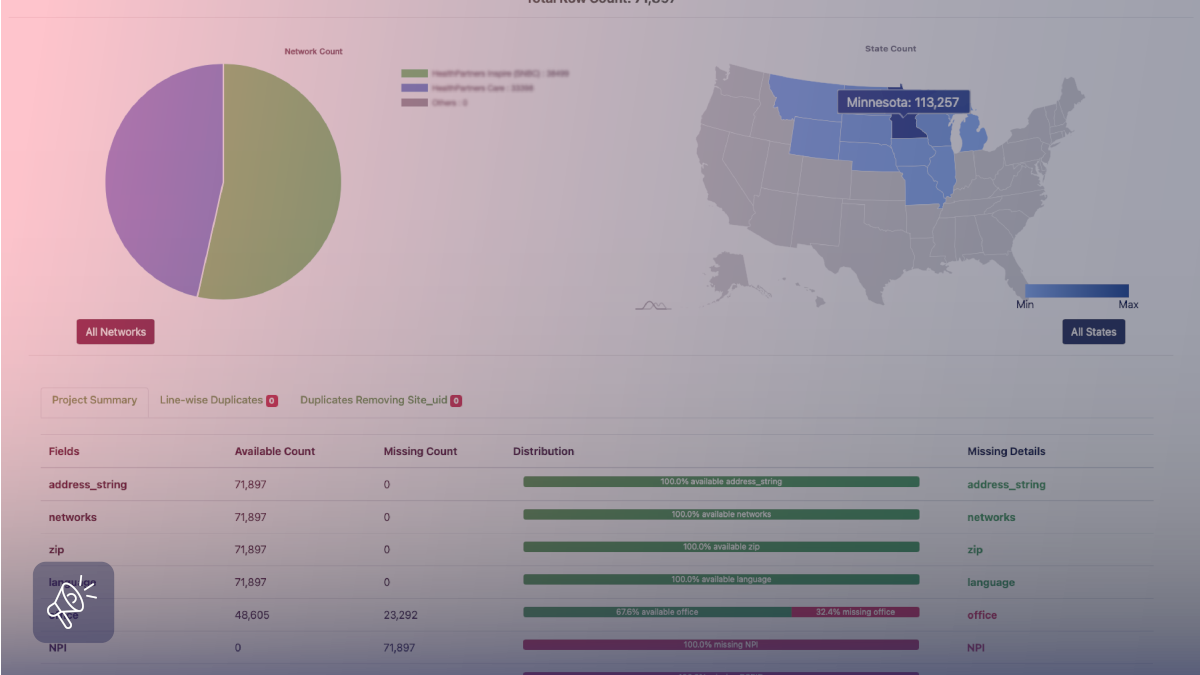

If you use a web scraping service like Grepsr, we export the extracted data directly to Excel and deliver the file, so you don’t have to do any spreadsheet work yourself. Our Excel integration also lets you export and update scraped data in real time.

To fully offload your extraction overhead, we also provide custom data extraction services: you share your requirements and we do the rest, whether that means extracting the data into an Excel file or integrating it with your systems via an API.

Using the ETL (Extract, Transform, Load) Framework for Data Integration

To use scraped data effectively, data integration is key. ETL (Extract, Transform, Load) is a method for combining data from different sources into a single, consistent format. Here are the stages of ETL in web scraping:

Extract:

In this stage, we use web scraping to collect data from many websites and sources. You can choose from various output formats, such as CSV, JSON, or Excel.

Transform:

During the transformation stage, the extracted data is cleaned, normalized, and restructured. This step ensures that the data is uniformly formatted and ready for analysis or integration with other datasets.
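As an example of the Transform stage, the sketch below normalizes rows scraped from two hypothetical sites that format names and prices differently, then drops duplicates. The field names, the sample rows, and the deduplication rule (same name and price) are assumptions for illustration; it also assumes every price is already in US dollars and uses a period as the decimal separator.

```python
import re
from decimal import Decimal

# Hypothetical raw rows from two sites that format the same fields differently.
RAW_ROWS = [
    {"source": "site_a", "name": "  Desk Lamp ", "price": "$1,299.00"},
    {"source": "site_b", "name": "DESK LAMP", "price": "USD 1299"},
    {"source": "site_b", "name": "Office Chair", "price": "149.5 USD"},
]

# Keep digits and the decimal point; drop currency symbols, codes, and commas.
NON_NUMERIC = re.compile(r"[^\d.]")


def normalize_price(text: str) -> Decimal:
    """Turn a price string into a Decimal rounded to cents."""
    return Decimal(NON_NUMERIC.sub("", text)).quantize(Decimal("0.01"))


def normalize_name(text: str) -> str:
    """Collapse whitespace and use title case so duplicates compare equal."""
    return " ".join(text.split()).title()


def transform(rows: list[dict]) -> list[dict]:
    """Normalize fields and drop rows that repeat an earlier (name, price) pair."""
    seen = set()
    cleaned = []
    for row in rows:
        record = {
            "name": normalize_name(row["name"]),
            "price_usd": normalize_price(row["price"]),
            "source": row["source"],
        }
        key = (record["name"], record["price_usd"])
        if key not in seen:
            seen.add(key)
            cleaned.append(record)
    return cleaned
```

Using Decimal rather than float keeps the parsed prices exact, so equal prices always compare equal during deduplication.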

Load:

As the last step, the transformed data is loaded into the destination system, such as an internal data warehouse or database, or delivered to other applications via APIs.

Applying ETL principles to web scraping helps you streamline the integration of scraped data into your existing systems and workflows, enabling efficient analysis and decision-making.

Real-world applications of web scraping

Unlike an organization’s internal data, external web data generally offers insight into the market and the environment the organization operates in. You can check our listing of various web data applications here. Below are a few notable examples of how global enterprises capitalize on web scraping.

Price Monitoring

Enterprises use web scraping to monitor competitor prices and adjust their pricing strategy accordingly. E-commerce companies often build automated pricing algorithms that use web data as input signals.
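A common first step in such an algorithm is comparing your own prices with scraped competitor prices. The sketch below shows one simple comparison; the SKUs, prices, function name, and the 5% threshold are hypothetical, and real repricing logic usually weighs many more factors.

```python
def find_undercut_skus(
    our_prices: dict[str, float],
    competitor_prices: dict[str, float],
    threshold_pct: float = 5.0,
) -> list[tuple[str, float]]:
    """Return (sku, gap %) pairs where the competitor is cheaper by more than threshold_pct.

    The gap is measured relative to the competitor's price. SKUs missing
    from either side are skipped, and results are sorted by SKU.
    """
    flagged = []
    for sku in sorted(our_prices.keys() & competitor_prices.keys()):
        ours, theirs = our_prices[sku], competitor_prices[sku]
        gap_pct = (ours - theirs) / theirs * 100
        if gap_pct > threshold_pct:
            flagged.append((sku, round(gap_pct, 2)))
    return flagged
```

The flagged list can be exported to an Excel sheet for review or fed into whatever pricing rules the business uses.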

Market Research

Web scraping gives companies the ability to gather market intelligence, assess customer sentiment, and identify new market trends. Customer reviews and Q&A data, competitor product catalogs, social media data, and historical pricing data are often collected to perform market research and measure trends.

Lead Generation

Web scraping is used to extract contact information from websites and generate leads for sales and marketing purposes. What you would generally look for is the digital footprint that your target audience leaves behind when they have an intent to purchase.

Academic Research

Researchers use web scraping to gather web data at scale for their studies and to gain new perspectives on a wide variety of topics.

In conclusion

Web scraping is a powerful technology that helps uncover insights hidden across the web. Utilizing web scraping tools to their full potential while adhering to standard practices can enable you to obtain insightful data, make wise choices, and gain the competitive advantage you are looking for.

Automating web data extractions offers countless opportunities for data-driven analysis and integration, while saving a significant amount of time that might have been wasted collecting data manually.

Harness web scraping’s strength and tap into the infinite possibilities hidden within the enormous digital world with Grepsr.

Launch your web scraping adventure right away and explore the wealth of data that is just waiting to be found!

FAQs

What is web scraping to Excel?

Web scraping to Excel is the automated process of extracting data from websites and exporting it directly into Excel spreadsheets for easy analysis and reporting.

What are the best ways to scrape data to Excel?

Three main methods:

- Python (Beautiful Soup + Pandas): Best for developers needing custom logic

- Browser Extensions: Point-and-click tools (no coding) for simple sites

- Managed Services (e.g., Grepsr): Fully automated and scalable, delivering cleaned Excel files directly

Companies using managed services report an 85% reduction in data acquisition costs compared to in-house teams.

How does web scraping work?

- Send HTTP requests to websites

- Parse HTML using CSS selectors or XPath

- Extract desired data

- Export to Excel (or CSV/JSON)

Example: A price monitoring scraper can check competitor pages every 6 hours and update Excel automatically.

What are the top business uses of web scraping to Excel?

- Price Monitoring: Track competitor pricing

- Market Research: Collect reviews and trends

- Lead Generation: Extract contact details

- Competitive Intelligence: Monitor competitor catalogs

Many e-commerce companies use scraped data to power automated pricing algorithms.

What is ETL in web scraping?

ETL (Extract, Transform, Load):

- Extract: Scrape data from websites

- Transform: Clean and normalize the data

- Load: Import into Excel, databases, or APIs

ETL makes scraped data usable across business systems.

Can I scrape to Excel without coding?

Yes. Use browser extensions like Pline for quick jobs or managed services like Grepsr for large-scale, ongoing extraction. These services deliver ready-to-use Excel files on your schedule and handle all technical issues (site changes, CAPTCHAs, IP rotation) automatically.