Almost every system you come across today either has an API for its customers or has one on its roadmap. APIs are great if you really need to interact with the system, but if you only want to extract data from the website, web scraping is often a much better option. Here we discuss some of the benefits of web scraping over using an API.

Availability

You might think that most sites have an API today. Many do, but more often than not the data available through the API is limited. Even if the API provides access to all the data, you still have to adhere to its rate limits.

APIs are often a neglected part of the system. A site may change its web pages, yet the corresponding changes to the data structure may not show up in the API until months later.

Also, while sites care a great deal about the uptime and availability of the website, because it serves their most important customers, downtime at the API endpoint sometimes goes unnoticed for days.

Up-to-date

APIs tend to update slowly because they are normally at the bottom of the priority list, so the data they serve may sometimes be stale. When you scrape the content off the website instead, you get what you see. The data is also easier to verify and hold to account: you can simply compare what you collected with what actually appears on the site.

Rate Limits

Most APIs have their own rate limits. These limits are normally based on the time between two consecutive queries, the number of concurrent queries, and the number of records returned per query. Web scraping in general has no such formal limits. As long as you are not hammering the site with hundreds of concurrent requests, sites will not normally ban you.
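One way to avoid hammering a site is to enforce a minimum delay between your own requests. The following is a minimal sketch of that idea, not a recommended setting for any particular site: the `Throttle` class, its `min_interval` parameter, and the injectable `clock` and `sleep` arguments are all names made up for this illustration.

```python
import time


class Throttle:
    """Enforce a minimum delay between consecutive requests (hypothetical helper)."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        # min_interval: seconds that must pass between two requests (an assumed value
        # you choose yourself; the site does not publish it).
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous request, None before the first one

    def wait(self):
        """Block until at least min_interval seconds have passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now
```

In a scraper you would call `throttle.wait()` right before each page fetch, for example with `throttle = Throttle(2.0)` to leave about two seconds between requests.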

Websites will serve you a CAPTCHA or other forms of verification if you are too harsh on them. Scraping a site protected by a CAPTCHA can be tricky, but today there are solutions for that too.

Better Structure

Navigating a badly structured API can be very tedious and time-consuming. You might have to make dozens of queries before getting to the data that you actually need.

Websites today are better structured than they have ever been. With so many sites aiming for valid XHTML in order to fare better in search engine rankings, the structure of websites today is clean and easy to scrape. The increasing use of JSON, JSONP, XML and Microdata has further structured the data used on websites.
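To illustrate how Microdata makes a page easier to scrape, here is a small sketch that collects `itemprop` values using only Python's standard library. The `ItempropParser` class and the sample markup are invented for this example, and the parser deliberately handles only flat, non-nested properties.

```python
from html.parser import HTMLParser

# Hypothetical product snippet marked up with Microdata attributes.
SAMPLE_HTML = """
<div itemscope itemtype="https://schema.org/Product">
  <span itemprop="name">Blue Widget</span>
  <meta itemprop="price" content="19.99">
  <span itemprop="priceCurrency">USD</span>
</div>
"""


class ItempropParser(HTMLParser):
    """Collect itemprop values from flat (non-nested) Microdata markup."""

    def __init__(self):
        super().__init__()
        self.props = {}
        self._current = None  # itemprop whose text content is being read

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        prop = attrs.get("itemprop")
        if prop is None:
            return
        if "content" in attrs:
            # The value is carried in the content attribute, e.g. on <meta>.
            self.props[prop] = attrs["content"]
        else:
            # The value is the element's text; start collecting it.
            self._current = prop
            self.props[prop] = ""

    def handle_data(self, data):
        if self._current is not None:
            self.props[self._current] += data

    def handle_endtag(self, tag):
        if self._current is not None:
            self.props[self._current] = self.props[self._current].strip()
            self._current = None


def extract_itemprops(markup):
    parser = ItempropParser()
    parser.feed(markup)
    return parser.props
```

Calling `extract_itemprops(SAMPLE_HTML)` returns a plain dictionary of property names and values, with no regular expressions and no dependence on where the tags sit in the layout.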

Earlier scraping methods involved complex regular expressions and relied heavily on how the tags were laid out on the page. Although regular expressions have not disappeared entirely, the availability of queries at the XPath and DOM levels has made this much easier.
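As a rough sketch of what an XPath-level query looks like, the example below uses the limited XPath support in Python's standard `xml.etree.ElementTree` module. The markup, the class names, and the `extract_products` function are assumptions made for illustration. Note that this approach only works on well-formed markup; real-world pages usually need a more forgiving HTML parser.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed XHTML fragment standing in for a product listing page.
XHTML = """
<html>
  <body>
    <div class="product">
      <h2 class="name">Blue Widget</h2>
      <span class="price">19.99</span>
    </div>
    <div class="product">
      <h2 class="name">Red Widget</h2>
      <span class="price">24.50</span>
    </div>
  </body>
</html>
"""


def extract_products(markup):
    """Return one dict per product block, located by path rather than by regex."""
    root = ET.fromstring(markup)
    products = []
    # Find every <div class="product"> anywhere below the root element.
    for node in root.findall(".//div[@class='product']"):
        name = node.findtext("h2[@class='name']")
        price = node.findtext("span[@class='price']")
        products.append({"name": name.strip(), "price": float(price)})
    return products
```

The query describes what to select (a `div` with a given class, then its name and price children) instead of matching the surrounding text character by character, which is why it tends to survive small layout changes better than a regular expression.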

Scraping websites that use AJAX might look daunting at first, but it often turns out to be easier than scraping content from plain HTML. These AJAX endpoints normally return structured data as clean JSON or XML.
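The sketch below shows why a JSON response is convenient to work with. The payload shape (`page`, `items`, `id`, `title`), the `fetch_json` and `rows_from_payload` helpers, and the request headers are all assumptions for illustration; a real site's endpoint will have its own URL and field names, which you would find by inspecting the requests the page makes.

```python
import json
from urllib.request import Request, urlopen


def fetch_json(url, timeout=10):
    """Fetch a URL and decode the body as JSON (the URL is supplied by the caller)."""
    request = Request(url, headers={"Accept": "application/json"})
    with urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def rows_from_payload(payload):
    """Flatten the assumed payload shape into (id, title) tuples."""
    return [(item["id"], item["title"]) for item in payload.get("items", [])]


# Hypothetical response body, standing in for what an AJAX endpoint might return.
SAMPLE = (
    '{"page": 1, "items": ['
    '{"id": 101, "title": "First post"}, '
    '{"id": 102, "title": "Second post"}]}'
)

rows = rows_from_payload(json.loads(SAMPLE))
```

Here the data arrives already separated into fields, so there is no markup to parse at all; against a live endpoint you would pass the result of `fetch_json(...)` to `rows_from_payload` instead of the sample string.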

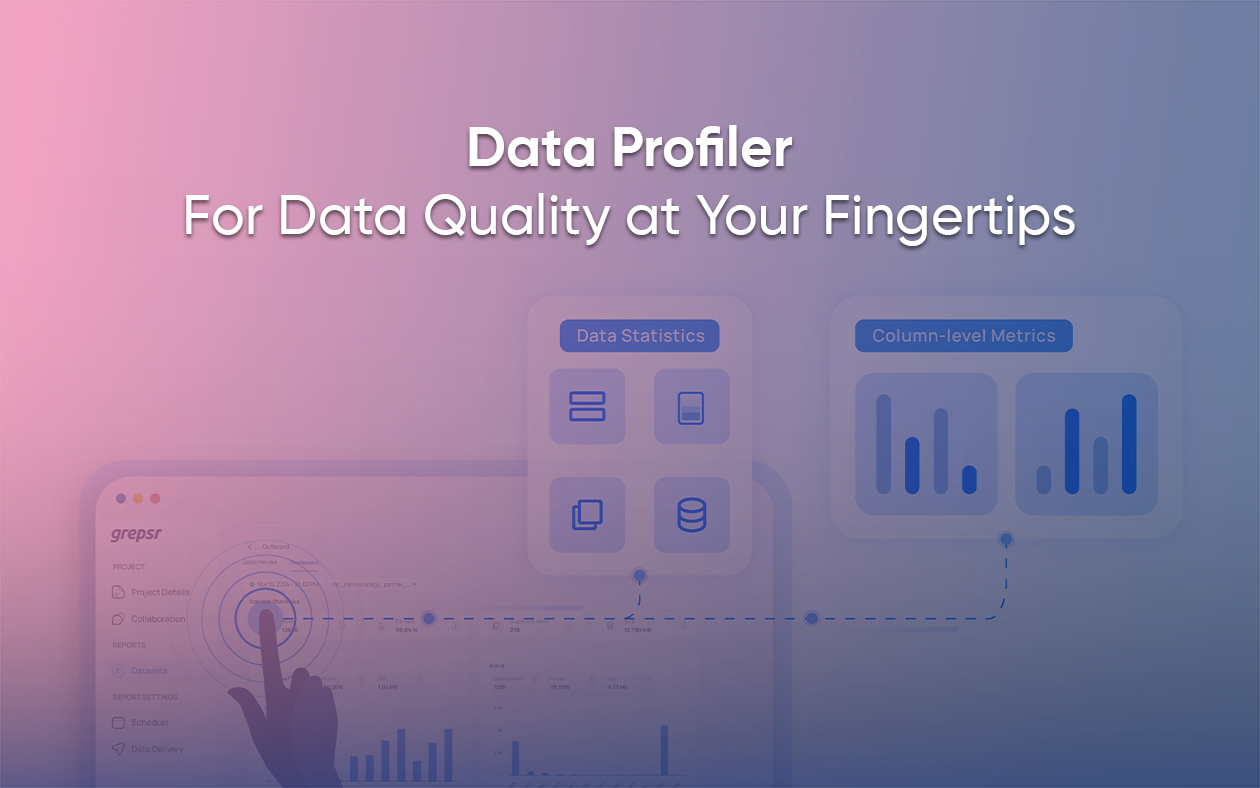

Getting started with Web Scraping

There are many frameworks available for getting started with your own small projects. However, dealing with large volumes of data in a scalable manner can be tricky in web scraping. There are also various tools that let you point at a page and get data. However, they are often either not very easy to use or produce poor-quality data.

If you just want to get started with web scraping without getting your hands dirty, you can try a web scraping service like Grepsr, where we provide data as a service.