Personalization is one of those things customers rarely describe directly, but they feel it instantly. The store that “gets them” wins more add-to-carts, more repeat purchases, and more word of mouth. The store that fails to do so feels noisy, repetitive, and forgettable.

For data scientists, product managers, and marketing teams, the real work starts with e-commerce personalization data. When you combine strong first-party signals (what users do on your site) with responsibly collected web data (what the market is doing outside your site), you can build recommendations and offers that stay relevant, even when customer tastes and competitive landscapes change fast.

This guide explains what personalization really means in e-commerce, how to collect behavior and preference data, how scraped data supports recommendations, how to integrate real-time signals for personalized offers, and how to balance personalization with user privacy.

What does personalization mean in e-commerce?

E-commerce personalization is the ability to adapt what shoppers see based on context and intent. That could mean:

- the products shown on the homepage

- the order of categories or collections

- “recommended for you” carousels

- personalized marketing messages, such as email or push notifications

- offers that match a shopper’s stage, not just their demographic

The goal is not to “show different content for everyone.” The goal is to reduce decision fatigue and help people find the right product faster.

Collecting user behavior and preference data

Most high-performing personalization systems start with first-party data, meaning data collected from your own platform with clear user notice and consent where required.

Behavior data you can collect on your own platform

This is often what people mean by “user behavior scraping,” but a healthier way to think about it is “event tracking on my own product.” Typical signals include:

- product views, category views, and search queries

- add-to-cart, remove-from-cart, and wishlist events

- purchases, returns, and cancellations

- time spent, scroll depth, and session patterns (used carefully)

- email clicks and campaign attribution

These events power both recommendations and segmentation.
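As a rough illustration of the event tracking described above, the sketch below defines a small event record and validates its type before it is sent downstream. The event names, the `TrackingEvent` class, and the `user_key` field are assumptions made for this example, not a standard schema.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Any

# Hypothetical event names; adapt them to your own tracking plan.
ALLOWED_EVENTS = {
    "product_view", "category_view", "search",
    "add_to_cart", "remove_from_cart", "wishlist_add",
    "purchase", "return", "cancellation", "email_click",
}


@dataclass
class TrackingEvent:
    event_type: str
    user_key: str      # pseudonymous key, not an email address or name
    session_id: str
    properties: dict[str, Any] = field(default_factory=dict)
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self) -> None:
        # Reject unknown event types early so downstream tables stay clean.
        if self.event_type not in ALLOWED_EVENTS:
            raise ValueError(f"unknown event type: {self.event_type}")


def to_record(event: TrackingEvent) -> dict[str, Any]:
    """Flatten an event into a dict ready for a queue or warehouse table."""
    return asdict(event)


event = TrackingEvent(
    event_type="add_to_cart",
    user_key="u_123",
    session_id="s_456",
    properties={"product_id": "sku-1", "price": 49.0},
)
record = to_record(event)
```

Keeping the allowed event types in one place makes it easier to audit exactly what is collected, which also helps with the privacy practices discussed later.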

Preference data that makes personalization feel human

Behavior tells you what users did. Preference tells you why. Useful preference signals include:

- size, color, and price-range preferences

- brand affinity

- explicit “I like this” and “not interested” feedback

- short quizzes (style, use-case, goals)

- post-purchase review tags (fit, comfort, durability, value)

Preference data is also the easiest way to reduce “creepy personalization,” because users are telling you what they want.

What scraped data can add to personalization

Scraped data is most valuable when it fills gaps that your first-party data cannot cover, especially gaps in market context.

Product and catalog signals from the open web

Scraped data can support:

- competitor assortment changes (what is trending in your category)

- price bands and promotion patterns by season

- emerging attributes and feature language competitors highlight

- availability signals that indicate demand surges

Used correctly, this helps product managers prioritize what to feature and helps models understand what is “hot” right now.

Reviews, ratings, and content signals

Public review ecosystems often contain rich signals about why people buy or avoid certain products. That can support:

- better product ranking within a category page

- “recommended because” explanations (durability, comfort, value)

- smarter bundling (items commonly mentioned together)

- personalized marketing angles (what benefits matter to each segment)

Important note: Avoid collecting personal data from third-party platforms. Focus on aggregate, non-identifying signals and follow site terms and applicable laws.

Building recommendation systems with scraped data

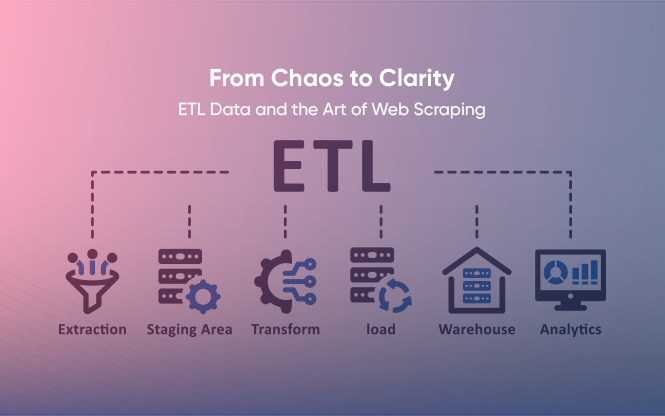

A product recommendation engine usually has three layers: candidate generation, ranking, and post-processing.

Candidate generation: what could we recommend?

Common approaches:

- collaborative filtering (users who behave similarly tend to buy similar items)

- item-to-item similarity (products frequently viewed or bought together)

- content-based candidates (similar attributes, category, price band, style)

Scraped data helps by enriching item attributes and market context, especially when your internal catalog lacks consistent metadata.
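To make the item-to-item approach concrete, here is a minimal sketch that counts how often products appear in the same basket and uses those counts as candidates. The basket data and function names are invented for illustration; production systems usually normalize the counts and work on much larger logs.

```python
from collections import Counter, defaultdict
from itertools import combinations


def build_cooccurrence(baskets):
    """Count how often each pair of items appears in the same basket."""
    co_counts = defaultdict(Counter)
    for basket in baskets:
        # set() ignores repeated items within a single basket.
        for a, b in combinations(sorted(set(basket)), 2):
            co_counts[a][b] += 1
            co_counts[b][a] += 1
    return co_counts


def candidates_for(item, co_counts, k=3):
    """Return up to k items most often seen together with `item`."""
    neighbors = co_counts.get(item, Counter())
    return [other for other, _count in neighbors.most_common(k)]


# Toy purchase baskets (hypothetical data).
baskets = [
    ["tent", "sleeping_bag", "stove"],
    ["tent", "sleeping_bag"],
    ["tent", "lantern"],
    ["stove", "lantern"],
]
co_counts = build_cooccurrence(baskets)
tent_candidates = candidates_for("tent", co_counts, k=2)
```

In this toy data, `sleeping_bag` co-occurs with `tent` most often, so it comes first in `tent_candidates`. Raw counts favor popular items, so teams often divide by item frequency or use a similarity measure such as cosine similarity instead.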

Ranking: what should we recommend right now?

Ranking determines which candidates to show first. Inputs often include:

- user intent signals (recent searches, recent category views)

- popularity and velocity (what is surging today, not last month)

- margin and inventory constraints (do not recommend items you cannot fulfill)

- price sensitivity signals (how a shopper responds to discounts and price changes, similar to elasticity)

This is where “web + first-party” becomes powerful. Your system can see both the shopper’s intent and the outside market reality.
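One simple way to combine these inputs is a weighted score, sketched below. The `Candidate` fields, the weights, and the assumption that every signal is already normalized to a 0 to 1 range are all illustrative; many teams use a learned ranking model rather than hand-set weights.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    product_id: str
    category: str
    velocity: float      # recent demand growth, normalized to 0..1
    margin: float        # normalized to 0..1
    market_trend: float  # external market signal, normalized to 0..1
    in_stock: bool


# Illustrative weights only; in practice these would be tuned or learned.
WEIGHTS = {"intent": 0.4, "velocity": 0.25, "margin": 0.15, "market_trend": 0.2}


def score(candidate, recent_categories):
    """Blend shopper intent with popularity, margin, and market context."""
    intent = 1.0 if candidate.category in recent_categories else 0.0
    return (
        WEIGHTS["intent"] * intent
        + WEIGHTS["velocity"] * candidate.velocity
        + WEIGHTS["margin"] * candidate.margin
        + WEIGHTS["market_trend"] * candidate.market_trend
    )


def rank(candidates, recent_categories):
    """Drop items that cannot be fulfilled, then sort by score, best first."""
    eligible = [c for c in candidates if c.in_stock]
    return sorted(eligible, key=lambda c: score(c, recent_categories), reverse=True)


candidates = [
    Candidate("sandals", "beach", velocity=0.9, margin=0.9, market_trend=0.2, in_stock=True),
    Candidate("boots", "hiking", velocity=0.5, margin=0.5, market_trend=0.8, in_stock=True),
    Candidate("poles", "hiking", velocity=0.7, margin=0.6, market_trend=0.9, in_stock=False),
]
ranked_ids = [c.product_id for c in rank(candidates, recent_categories={"hiking"})]
```

Here the shopper recently browsed hiking products, so `boots` outranks `sandals` even though `sandals` has higher velocity and margin, and the out-of-stock `poles` never appear.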

Post-processing: business rules that protect trust

Even a good model can hurt the experience if it ignores basic rules:

- deduplicate near-identical items

- avoid recommending out-of-stock products

- enforce diversity (not 10 black sneakers in a row)

- apply fairness and brand constraints (do not over-personalize into a narrow bubble)
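The rules above can be sketched as a single pass over an already ranked list. The item fields, the choice of brand and model as the near-duplicate key, and the per-group cap are assumptions made for this example.

```python
def post_process(ranked_items, max_per_group=2, limit=5):
    """Apply stock, deduplication, and diversity rules to a ranked list."""
    seen_variants = set()
    group_counts = {}
    result = []
    for item in ranked_items:
        # Rule 1: never recommend something that cannot be bought.
        if not item["in_stock"]:
            continue
        # Rule 2: treat the same brand and model as near-identical variants.
        variant_key = (item["brand"], item["model"])
        if variant_key in seen_variants:
            continue
        # Rule 3: cap how many items share a category and color.
        group = (item["category"], item["color"])
        if group_counts.get(group, 0) >= max_per_group:
            continue
        seen_variants.add(variant_key)
        group_counts[group] = group_counts.get(group, 0) + 1
        result.append(item)
        if len(result) == limit:
            break
    return result


# Hypothetical ranked output from a model, best first.
ranked = [
    {"id": "a1", "brand": "Acme", "model": "Runner", "category": "sneakers", "color": "black", "in_stock": True},
    {"id": "a2", "brand": "Acme", "model": "Runner", "category": "sneakers", "color": "black", "in_stock": True},
    {"id": "b1", "brand": "Bolt", "model": "Sprint", "category": "sneakers", "color": "black", "in_stock": True},
    {"id": "c1", "brand": "Crest", "model": "Trail", "category": "sneakers", "color": "black", "in_stock": True},
    {"id": "d1", "brand": "Dune", "model": "Walk", "category": "sneakers", "color": "white", "in_stock": False},
    {"id": "e1", "brand": "Echo", "model": "Glide", "category": "sneakers", "color": "white", "in_stock": True},
]
shown = [item["id"] for item in post_process(ranked)]
```

With this input, `a2` is dropped as a variant of `a1`, `c1` is dropped as a third black sneaker, and `d1` is dropped for being out of stock, leaving `a1`, `b1`, and `e1`.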

Integrating real-time data for personalized offers

Personalization improves noticeably when it responds to what a shopper is doing right now.

Practical real-time inputs include:

- current session behavior (search terms, product comparisons)

- cart contents and cart abandonment moments

- price drops or restocks (triggering timely messages)

- short-lived promotions (show offers only when valid and relevant)

From a systems view, teams often implement:

- a streaming event pipeline for session signals

- a feature store for consistent model inputs

- a near-real-time ranking service so recommendations update quickly

This is how you move from “recommendations based on history” to “recommendations based on intent.”
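As a small-scale illustration of session signals feeding a ranking layer, the sketch below keeps a sliding window of recent events in memory and derives a few features from it. The `SessionFeatures` class, the 30-minute window, and the feature names are hypothetical; a real deployment would typically sit behind a streaming pipeline and a feature store.

```python
from collections import Counter, deque


class SessionFeatures:
    """Keep a time-bounded window of session events and derive features."""

    def __init__(self, window_seconds=1800):
        self.window_seconds = window_seconds
        self.events = deque()  # (timestamp, event_type, category), oldest first

    def add(self, timestamp, event_type, category):
        self.events.append((timestamp, event_type, category))
        self._evict(timestamp)

    def _evict(self, now):
        # Drop events that have fallen out of the time window.
        while self.events and now - self.events[0][0] > self.window_seconds:
            self.events.popleft()

    def snapshot(self, now):
        """Return the current feature values for the ranking service."""
        self._evict(now)
        views = Counter(
            cat for _ts, etype, cat in self.events if etype == "product_view"
        )
        top = views.most_common(1)
        return {
            "top_category": top[0][0] if top else None,
            "cart_adds": sum(1 for _ts, etype, _cat in self.events if etype == "add_to_cart"),
            "event_count": len(self.events),
        }


session = SessionFeatures(window_seconds=1800)
session.add(0, "product_view", "shoes")
session.add(100, "product_view", "shoes")
session.add(200, "add_to_cart", "shoes")
early = session.snapshot(now=200)

session.add(2500, "product_view", "jackets")
later = session.snapshot(now=2500)
```

In this run, `early` reflects interest in shoes with one cart add, while `later` reflects only the jacket view because the older events have aged out of the window. That shift is the difference between recommending on history and recommending on current intent.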

Balancing personalization with user privacy

Personalization works best when customers trust you. That trust breaks quickly if tracking feels hidden or invasive.

A practical privacy-first approach:

- collect only what you need, not everything you can

- clearly explain what you collect and why

- give users controls (opt out, reset personalization, manage preferences)

- avoid using sensitive attributes for targeting

- separate identity from behavior where possible (pseudonymize)

- treat third-party scraped data carefully, focusing on market-level signals instead of individual user data

If you are personalizing across regions, align your data practices with relevant regulations and your own compliance team’s guidance.
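For the pseudonymization step in the list above, one common technique is a keyed hash of the user identifier. The sketch below uses HMAC-SHA256 from the Python standard library; the field names and the placeholder key are assumptions, and whether this approach is sufficient for your obligations is a question for your compliance team.

```python
import hashlib
import hmac

# Placeholder only: load the real key from a secrets manager, never from code.
SECRET_KEY = b"replace-with-a-managed-secret"

# Hypothetical direct identifiers to strip from behavior events.
SENSITIVE_FIELDS = {"email", "name", "phone"}


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Return a stable keyed hash so events can be joined without the raw ID."""
    digest = hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def strip_identity(event: dict, secret_key: bytes) -> dict:
    """Remove direct identifiers and replace user_id with a pseudonymous key."""
    cleaned = {
        key: value
        for key, value in event.items()
        if key not in SENSITIVE_FIELDS and key != "user_id"
    }
    cleaned["user_key"] = pseudonymize(event["user_id"], secret_key)
    return cleaned


raw_event = {
    "user_id": "user-42",
    "email": "shopper@example.com",
    "event_type": "purchase",
    "product_id": "sku-1",
}
safe_event = strip_identity(raw_event, SECRET_KEY)
```

A keyed hash is used instead of a plain hash so that someone without the key cannot rebuild the mapping by hashing known user IDs. Note that pseudonymized data can still count as personal data under some regulations.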

Benchmarking industry trends with scraped data

Your personalization strategy should not live in a vacuum. One of the smartest uses of web data is benchmarking industry trends, such as:

- how competitors position products (feature claims, bundles, naming)

- category-level price movement and promo timing

- what the “new normal” looks like for shipping promises and returns

- fast-rising subcategories that deserve a new collection or landing page

This is especially helpful for product managers deciding what to build next and for marketing teams deciding what to emphasize.
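Category-level price movement, for example, can be approximated by tracking the median observed price per category over time. The sketch below assumes price observations have already been collected and labeled with a category and a week; the field names and numbers are made up for illustration.

```python
from collections import defaultdict
from statistics import median


def median_price_by_category(observations):
    """Group price observations by (category, week) and take the median."""
    grouped = defaultdict(list)
    for obs in observations:
        grouped[(obs["category"], obs["week"])].append(obs["price"])
    return {key: median(prices) for key, prices in grouped.items()}


def week_over_week_change(medians, category, prev_week, week):
    """Relative change in median price between two weeks."""
    previous = medians[(category, prev_week)]
    current = medians[(category, week)]
    return (current - previous) / previous


# Hypothetical market-level observations with no user data involved.
observations = [
    {"category": "sneakers", "week": 1, "price": 100},
    {"category": "sneakers", "week": 1, "price": 120},
    {"category": "sneakers", "week": 1, "price": 80},
    {"category": "sneakers", "week": 2, "price": 90},
    {"category": "sneakers", "week": 2, "price": 90},
    {"category": "sneakers", "week": 2, "price": 110},
]
medians = median_price_by_category(observations)
change = week_over_week_change(medians, "sneakers", prev_week=1, week=2)
```

In this toy data the median drops from 100 to 90, a 10% decline. The median is used rather than the mean so that a single extreme listing does not distort the benchmark.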

Examples: Netflix and Amazon’s personalization techniques

Netflix is often associated with personalization because the entire discovery experience is shaped by your behavior, not just a “recommended” section. It learns what you watch, what you abandon, and what you binge.

Amazon is the classic e-commerce example because personalization shows up everywhere: recommendations, “frequently bought together,” search ranking, and category merchandising. The system is not only learning “what you like,” it is balancing fulfillment, pricing, and availability so the suggestions stay practical.

You do not need Netflix-level complexity to benefit. Even small improvements, such as better item-to-item recommendations and smarter triggered offers, can meaningfully boost revenue.

How Grepsr supports personalization and recommendation workflows

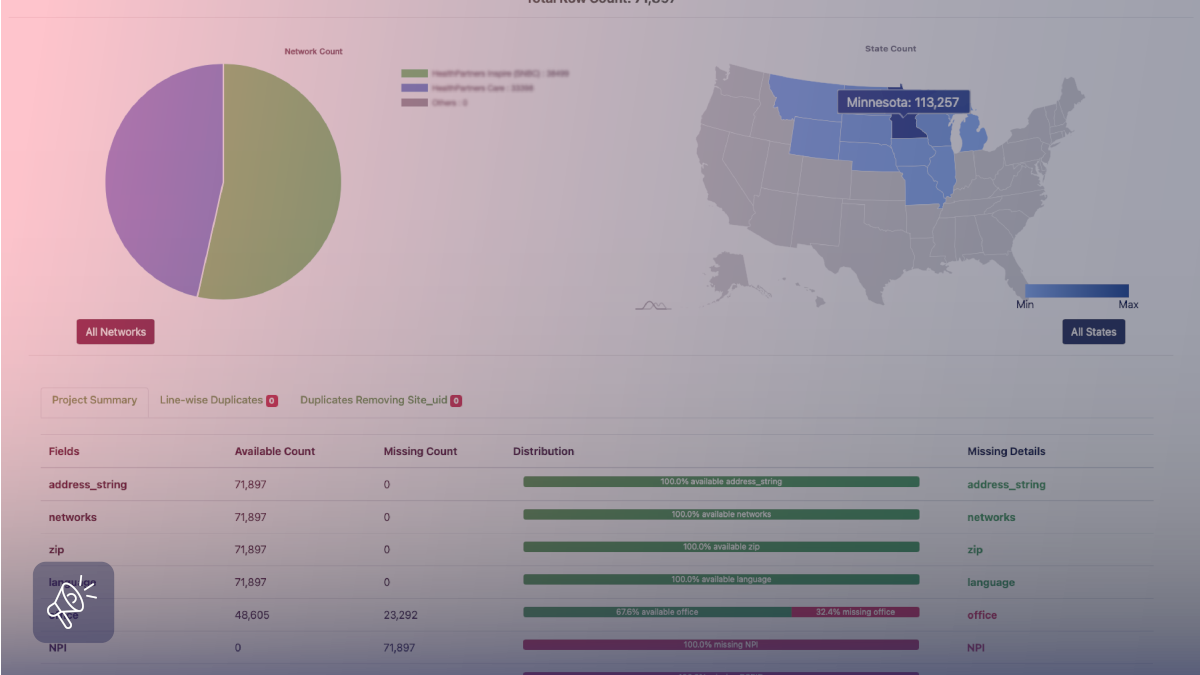

Personalization pipelines break when product data is inconsistent, incomplete, or stale. Grepsr keeps the foundation reliable by delivering structured product and market datasets as a managed feed through its Services model, so your recommendation logic is not learning from half-filled attributes or yesterday’s market.

That means you can enrich your catalog with external product metadata (so cold-start items still get placed correctly), bring in competitive and trend signals to improve ranking context, and run refresh cycles to keep recommendations aligned with what is actually available right now. Grepsr’s guide on feeding recommendation engines with web data explains why external metadata and market signals improve recommendations beyond internal-only logs.

For teams that need proof at scale, Tradeswell’s customer story shows how Grepsr delivered fresh Amazon product data across hundreds of categories multiple times a day, which is exactly the kind of consistent input stream ML-driven merchandising and recommendations depend on. And when you want to align fields, variant logic, and outputs with your model requirements, Grepsr’s work on data normalization is a useful reference point for how clean attributes stay consistent across sources.

If you want to map this to your catalog structure, data sources, and refresh frequency, start here.

FAQs

What is e-commerce personalization data?

It is the set of signals used to personalize the shopping experience, typically combining first-party behavioral data, preference data, catalog data, and, sometimes, market context data.

Can scraped data improve a product recommendation engine?

Yes, especially by enriching product attributes, adding market context, and helping benchmark trends, as long as the data is collected responsibly and does not violate privacy or terms.

What is user behavior scraping in e-commerce?

In practice, it usually refers to capturing user interaction events. The safest approach is tracking events on your own platform with clear user notice and consent where required.

How do you integrate real-time signals for personalized offers?

By collecting session events, updating features quickly, and using a near-real-time ranking layer that can change recommendations and offers based on current intent.

How can teams balance personalization with user privacy?

Collect only what is necessary, keep practices transparent, offer user controls, avoid sensitive targeting, and ensure compliance processes are built into the data pipeline.