Your product catalog is not just a list of items. It is the system customers use to decide whether to trust you, buy from you, and come back.

When catalogs go wrong, the damage is immediate: wrong prices, missing variants, duplicate listings, out-of-stock items still showing as available, and “almost the same” products scattered across categories. For e-commerce managers, data engineers, and catalog teams, this is why e-commerce product catalog scraping is no longer only a growth tactic. It is a quality-and-operations tactic.

In this guide, we will break down how product data extraction works in real-world catalog workflows, how to manage variations and multiple SKUs, how to normalize data across suppliers, how to build an automated catalog update pipeline, and how to keep data consistent and unique at scale. We will also show how to leverage web data for market research without turning your catalog into a messy dataset.

Why product catalog management matters more than most teams expect

Catalog quality directly impacts:

- conversion rate (customers abandon when details conflict)

- returns and support tickets (wrong specs lead to wrong purchases)

- SEO and discoverability (thin, duplicated, inconsistent product pages struggle)

- marketplace performance (mis-mapped SKUs break buy box eligibility and rankings)

- supply chain decisions (bad inventory signals create overstock or stockouts)

The hardest part is that catalog issues rarely stem from a single big mistake. They come from hundreds of small mismatches: a variant that did not update, a supplier feed with different naming, a pack-size change that looks like the same product, or an outdated image set.

This is exactly where structured scraping and validation can turn daily firefighting into a predictable system.

What to scrape for a strong catalog foundation

When teams hear “scraping,” they often think “price.” But catalog accuracy needs a broader capture.

Product details and images

Scraping product details and images usually includes:

- product title, short description, long description

- brand, model, and product identifiers (GTIN/EAN/UPC where available)

- key attributes (size, material, color, capacity, compatibility)

- feature bullets, box contents, warranty notes

- image URLs and alt text, plus available gallery positions

Images matter because they help validate matches. If your “same product” has a completely different image set, it is often not the same product.
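As a minimal sketch, the fields above could be held in a typed record like the one below. The class name `ScrapedProduct` and its field names are hypothetical, so adapt them to your own sources and schema.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ScrapedProduct:
    """One scraped product page (hypothetical schema, not a standard)."""

    source_url: str
    title: str
    brand: Optional[str] = None
    model: Optional[str] = None
    gtin: Optional[str] = None  # GTIN/EAN/UPC, only when the page exposes one
    short_description: str = ""
    long_description: str = ""
    # Key attributes such as size, material, color, capacity, compatibility
    attributes: dict[str, str] = field(default_factory=dict)
    feature_bullets: list[str] = field(default_factory=list)
    box_contents: list[str] = field(default_factory=list)
    warranty_notes: Optional[str] = None
    # (gallery position, image URL, alt text), in the order the page shows them
    images: list[tuple[int, str, str]] = field(default_factory=list)

    def has_identifier(self) -> bool:
        """True if the record can be matched by GTIN or by brand + model."""
        return bool(self.gtin) or bool(self.brand and self.model)
```

Storing the gallery as ordered tuples keeps the position data. That helps later, when image sets are compared during matching.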

Price and promotion context

For pricing and merchandising, you want more than “current price”:

- list price vs sale price

- coupon indicators or stacked offers (where visible)

- shipping cost and delivery promise (both affect conversion)

- seller identity (brand vs reseller vs marketplace seller)
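One way to keep this context together is to store it per observation and derive comparison values from it. The sketch below makes these assumptions:

- prices are captured as `Decimal`, which avoids floating-point rounding;
- `PriceContext` and the seller-type labels are illustrative names;
- "landed price" here means item price plus shipping, before taxes.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional


@dataclass(frozen=True)
class PriceContext:
    list_price: Decimal
    sale_price: Optional[Decimal] = None  # None when no sale price is shown
    shipping: Decimal = Decimal("0")
    coupon_visible: bool = False
    seller_type: str = "unknown"  # hypothetical labels: "brand", "reseller", "marketplace"

    @property
    def effective_price(self) -> Decimal:
        """The price the page actually charges for the item."""
        return self.sale_price if self.sale_price is not None else self.list_price

    @property
    def landed_price(self) -> Decimal:
        """Item price plus shipping (taxes excluded)."""
        return self.effective_price + self.shipping

    @property
    def discount_pct(self) -> Decimal:
        """Sale discount relative to list price, in percent."""
        if self.list_price <= 0:
            return Decimal("0")
        return (self.list_price - self.effective_price) / self.list_price * 100
```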

Stock and availability signals

For inventory scraping, focus on what customers actually see:

- in-stock/out-of-stock status

- low-stock hints (limited quantity messages)

- delivery date shifts that imply constrained inventory

- backorder or preorder signals

Stock signals are messy across sites, so consistency in how you interpret them matters more than trying to capture everything.
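A simple way to get consistent interpretation is an ordered rule list that maps raw availability text to a small set of statuses. The phrases below are illustrative English examples, not an exhaustive list. In practice each source usually needs its own rules, and delivery-date shifts would need separate date parsing.

```python
import re
from enum import Enum


class StockStatus(Enum):
    OUT_OF_STOCK = "out_of_stock"
    PREORDER = "preorder"
    BACKORDER = "backorder"
    LOW_STOCK = "low_stock"
    IN_STOCK = "in_stock"
    UNKNOWN = "unknown"


# Ordered rules: the first match wins, so specific phrases come before generic ones
# ("not in stock" must be caught before "in stock", "unavailable" before "available").
_STOCK_RULES = [
    (re.compile(r"out of stock|sold out|not in stock|unavailable"), StockStatus.OUT_OF_STOCK),
    (re.compile(r"pre-?order"), StockStatus.PREORDER),
    (re.compile(r"back-?order"), StockStatus.BACKORDER),
    (re.compile(r"only \d+ left|limited (quantity|stock)|low stock"), StockStatus.LOW_STOCK),
    (re.compile(r"in stock|available|add to cart"), StockStatus.IN_STOCK),
]


def normalize_stock(raw_text: str) -> StockStatus:
    """Map the availability text shown on a page to one normalized status."""
    text = " ".join(raw_text.lower().split())  # lowercase, collapse whitespace
    for pattern, status in _STOCK_RULES:
        if pattern.search(text):
            return status
    return StockStatus.UNKNOWN
```

Text that matches no rule becomes `UNKNOWN` rather than a guess. That keeps the interpretation consistent even when a site changes its wording.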

Handling variations and multiple SKUs without breaking your catalog

Variants are where catalogs go off-track. The fix is not “more fields.” The fix is a clean product structure.

A reliable approach is to model catalog entities like this:

- Product (parent): the main item concept (for example, “Nike Air Zoom Pegasus 41”)

- Variant (child SKU): the purchasable unit (size 9, black, men’s)

- Offer (seller listing): who sells it, at what price, with what shipping and stock

This prevents common failures like:

- treating every color as a separate product (duplicated SEO pages)

- mixing offer-level details (seller, shipping) into product-level attributes (material, size chart)

- merging different pack sizes into one SKU (pricing and return disasters)

From a scraping perspective, it also changes what you extract. You are not just scraping pages. You are scraping relationships: parent-to-variant, variant-to-offer.
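A minimal sketch of the product, variant, and offer layers as linked records follows. The class and field names are illustrative. The SKU codes in any usage are hypothetical.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class Offer:
    """Seller listing: offer-level details live here, never on the product."""
    seller: str
    price: Decimal
    shipping: Decimal
    in_stock: bool


@dataclass
class Variant:
    """Purchasable child SKU, defined by its attribute combination."""
    sku: str
    attributes: dict[str, str]  # e.g. {"size": "9", "color": "black", "pack": "1"}
    offers: list[Offer] = field(default_factory=list)


@dataclass
class Product:
    """Parent concept; holds attributes shared by all variants."""
    parent_id: str
    title: str
    brand: str
    shared_attributes: dict[str, str] = field(default_factory=dict)  # material, size chart
    variants: list[Variant] = field(default_factory=list)

    def add_variant(self, variant: Variant) -> None:
        # The same attribute combination twice under one parent would be a duplicate SKU.
        for existing in self.variants:
            if existing.attributes == variant.attributes:
                raise ValueError(f"{variant.sku} duplicates {existing.sku}: {variant.attributes}")
        self.variants.append(variant)
```

Colors and pack sizes become variant attributes under one parent. That addresses two of the failures listed above: each color no longer gets its own product page, and distinct pack sizes stay distinct. Sellers and shipping stay on the offer.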

Normalizing data across suppliers

Even when two suppliers sell the same product, they describe it differently. One says “Midnight Black,” another says “Black,” another says “Jet Black.” One uses “1L,” another uses “1000 ml.”

Normalization is the catalog team’s quiet superpower.

Build a controlled vocabulary

Create standardized values for:

- colors, sizes, units, materials

- gender/age segments where relevant

- category paths and attribute names

Then map every supplier value into your standard.
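Here is a minimal sketch of such a mapping for colors. The vocabulary entries and the function name are hypothetical. The main design choice is that unknown values are collected for review instead of passing through as free text.

```python
from typing import Optional

# Hypothetical controlled vocabulary: supplier spellings (lowercased) -> standard value.
COLOR_VOCAB: dict[str, str] = {
    "black": "Black",
    "jet black": "Black",
    "midnight black": "Black",
    "navy": "Navy",
    "navy blue": "Navy",
}


def to_standard(raw: str, vocab: dict[str, str], unmapped: set[str]) -> Optional[str]:
    """Map a supplier value onto the controlled vocabulary.

    Values with no mapping are added to `unmapped` so someone can extend the
    vocabulary, rather than letting unreviewed text into the catalog.
    """
    key = " ".join(raw.lower().split())  # lowercase and collapse whitespace
    standard = vocab.get(key)
    if standard is None:
        unmapped.add(key)
    return standard
```

If the supplier's marketing name (for example "Midnight Black") matters on the product page, it can be kept in a separate display field. The standardized value remains the one used for matching and filtering.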

Standardize measurement units early

Convert units consistently:

- cm vs inch

- ml vs L

- grams vs kg

This one step eliminates a surprising number of duplicate listings.
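A minimal sketch of early unit standardization parses a quantity string and converts it to one base unit per dimension (ml, g, cm). It assumes a plain number followed by a unit, with a dot as the decimal separator. Locale formats such as "1,5 l" would need handling before this step, and the conversion table is illustrative, not complete.

```python
import re
from decimal import Decimal

# Hypothetical conversion table: unit -> (base unit, factor into that base unit).
_TO_BASE: dict[str, tuple[str, Decimal]] = {
    "ml": ("ml", Decimal("1")),
    "l": ("ml", Decimal("1000")),
    "g": ("g", Decimal("1")),
    "kg": ("g", Decimal("1000")),
    "mm": ("cm", Decimal("0.1")),
    "cm": ("cm", Decimal("1")),
    "in": ("cm", Decimal("2.54")),
    "inch": ("cm", Decimal("2.54")),
    "inches": ("cm", Decimal("2.54")),
}

# A plain number (dot as decimal separator) followed by a unit, e.g. "1L" or "1000 ml".
_QUANTITY = re.compile(r"\s*([0-9]+(?:\.[0-9]+)?)\s*([a-z]+)\.?\s*")


def to_base_unit(text: str) -> tuple[Decimal, str]:
    """Return (value, base unit) so equal quantities compare equal."""
    match = _QUANTITY.fullmatch(text.lower())
    if match is None:
        raise ValueError(f"unrecognized quantity: {text!r}")
    number, unit = Decimal(match.group(1)), match.group(2)
    if unit not in _TO_BASE:
        raise ValueError(f"unknown unit: {unit!r}")
    base_unit, factor = _TO_BASE[unit]
    return number * factor, base_unit
```

After conversion, "1L" and "1000 ml" produce the same normalized value. They therefore no longer look like two different products.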

Make identifiers do the heavy lifting, but do not rely on them blindly

GTIN/EAN/UPC codes are helpful, but they are not always present or clean. Use them when available, but support them with fallback matching:

- title similarity + attribute match

- brand/model match

- image similarity checks for high-value items

This is where product data extraction goes beyond copying fields: it becomes the work of building trustworthy entities.
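The sketch below combines identifier matching with fallback matching at the product (parent) level. It validates GTINs with the standard GS1 mod-10 check digit before trusting them. The fallback then requires a brand match, followed by a model match or agreeing attributes plus similar titles. Some details are assumptions:

- `difflib.SequenceMatcher` stands in for whatever text similarity you prefer;
- the 0.85 threshold is a placeholder to tune;
- image similarity for high-value items is not covered, because it needs an image-hashing step.

```python
import re
from difflib import SequenceMatcher
from typing import Optional


def valid_gtin(code: Optional[str]) -> bool:
    """Check length and the GS1 check digit for GTIN-8, -12, -13, or -14."""
    code = (code or "").strip()
    if not re.fullmatch(r"[0-9]{8}|[0-9]{12,14}", code):
        return False
    body, check = code[:-1], int(code[-1])
    # Weights alternate 3, 1, 3, ... starting from the digit next to the check digit.
    total = sum(int(d) * (3 if i % 2 == 0 else 1) for i, d in enumerate(reversed(body)))
    return (10 - total % 10) % 10 == check


def _norm(text: Optional[str]) -> str:
    return " ".join((text or "").lower().split())


def is_probable_match(a: dict, b: dict, title_threshold: float = 0.85) -> bool:
    """Product-level match: trust valid GTINs first, then fall back to brand/model/title."""
    if valid_gtin(a.get("gtin")) and valid_gtin(b.get("gtin")):
        # Zero-pad to 14 digits so a UPC-A and its EAN-13 form compare equal.
        return a["gtin"].strip().zfill(14) == b["gtin"].strip().zfill(14)
    brand = _norm(a.get("brand"))
    if not brand or brand != _norm(b.get("brand")):
        return False
    model_a, model_b = _norm(a.get("model")), _norm(b.get("model"))
    if model_a and model_b:
        return model_a == model_b
    # Attributes that both sides report must agree (e.g. capacity, pack size).
    attrs_a, attrs_b = a.get("attributes", {}), b.get("attributes", {})
    if any(_norm(attrs_a[k]) != _norm(attrs_b[k]) for k in attrs_a.keys() & attrs_b.keys()):
        return False
    similarity = SequenceMatcher(None, _norm(a.get("title")), _norm(b.get("title"))).ratio()
    return similarity >= title_threshold
```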

Automating price and stock updates the right way

An automated catalog update pipeline should feel boring. It should run, validate, update, and log.

A practical workflow looks like this:

Step 1: Schedule by volatility

Not every SKU needs the same refresh:

- high-velocity SKUs: multiple times a day

- stable categories: daily

- slow-moving categories: weekly, with event-based refresh during campaigns
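A minimal sketch of volatility-based scheduling follows. The tier names, the 6-hour interval standing in for "multiple times a day", and the 12-hour campaign override are all assumptions to tune.

```python
from datetime import datetime, timedelta

# Hypothetical refresh intervals per volatility tier.
REFRESH_INTERVALS = {
    "high_velocity": timedelta(hours=6),  # "multiple times a day"
    "stable": timedelta(days=1),
    "slow_moving": timedelta(weeks=1),
}
# Assumed override: while a campaign runs, refresh at least this often.
CAMPAIGN_INTERVAL = timedelta(hours=12)


def next_refresh(tier: str, last_run: datetime, in_campaign: bool = False) -> datetime:
    interval = REFRESH_INTERVALS[tier]
    if in_campaign:
        interval = min(interval, CAMPAIGN_INTERVAL)  # never slows down a faster tier
    return last_run + interval


def due_skus(schedule: list[tuple[str, str, datetime, bool]], now: datetime) -> list[str]:
    """Return SKUs whose next refresh time has passed.

    Each schedule row is (sku, tier, last_run, in_campaign).
    """
    return [
        sku
        for sku, tier, last_run, in_campaign in schedule
        if next_refresh(tier, last_run, in_campaign) <= now
    ]
```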

Step 2: Update only what changed

Instead of rewriting the whole catalog each run, use deltas:

- price change events

- stock status changes

- attribute updates (rare, but critical when they happen)
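A minimal sketch of delta detection compares the previous and current snapshots, both keyed by SKU, and emits one event per changed field. The event type names are hypothetical. A SKU missing from the new run is deliberately flagged rather than deleted, because the page may simply have failed to load.

```python
from dataclasses import dataclass
from typing import Any, Optional

# Hypothetical mapping from field name to event type; other fields count as attributes.
_EVENT_TYPES = {"price": "price_change", "stock_status": "stock_change"}


@dataclass(frozen=True)
class ChangeEvent:
    sku: str
    event_type: str
    field_name: Optional[str] = None
    old: Any = None
    new: Any = None


def compute_deltas(previous: dict[str, dict], current: dict[str, dict]) -> list[ChangeEvent]:
    """Compare two snapshots keyed by SKU and emit one event per changed field."""
    events: list[ChangeEvent] = []
    for sku, new_record in current.items():
        old_record = previous.get(sku)
        if old_record is None:
            events.append(ChangeEvent(sku, "new_sku"))
            continue
        for name in sorted(old_record.keys() | new_record.keys()):
            old_value, new_value = old_record.get(name), new_record.get(name)
            if old_value != new_value:
                event_type = _EVENT_TYPES.get(name, "attribute_update")
                events.append(ChangeEvent(sku, event_type, name, old_value, new_value))
    # Flag, do not delete: a missing SKU may just be a failed page load.
    for sku in sorted(previous.keys() - current.keys()):
        events.append(ChangeEvent(sku, "missing_from_run"))
    return events
```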

Step 3: Protect your catalog with guardrails

Before publishing updates, run checks like:

- a sudden price drop beyond a set threshold

- stock status flip-flopping too frequently

- critical attributes missing compared to the last valid version

- updates that would create a duplicate SKU

This reduces “bad data moments” that cause customer complaints and internal chaos.
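The checks above can be sketched as one function that returns a list of violations, where an empty list means the update may be published. The thresholds, the field names, and the `identity_key` field on new records are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Guardrails:
    # Hypothetical thresholds; tune per category.
    max_price_drop_pct: float = 40.0
    max_stock_flips: int = 3  # status changes allowed within the recent window
    critical_attributes: tuple[str, ...] = ("title", "brand", "price")


def check_update(
    candidate: dict,
    last_valid: Optional[dict],
    recent_stock: list[str],
    existing_identity_keys: set,
    rules: Guardrails = Guardrails(),
) -> list[str]:
    """Return guardrail violations; an empty list means the update may be published."""
    if last_valid is None:
        # New SKU: block it if its identity key already belongs to an existing SKU.
        if candidate.get("identity_key") in existing_identity_keys:
            return ["duplicate_sku"]
        return []
    issues = []
    old_price, new_price = last_valid.get("price"), candidate.get("price")
    if old_price and new_price is not None:
        drop_pct = (old_price - new_price) / old_price * 100
        if drop_pct > rules.max_price_drop_pct:
            issues.append("price_drop")
    # Count status changes between consecutive observations.
    flips = sum(1 for before, after in zip(recent_stock, recent_stock[1:]) if before != after)
    if flips > rules.max_stock_flips:
        issues.append("stock_flip_flop")
    for name in rules.critical_attributes:
        if last_valid.get(name) and not candidate.get(name):
            issues.append(f"missing_{name}")
    return issues
```

Updates that fail a check can be held back, with the last valid version staying live, until they are reviewed.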

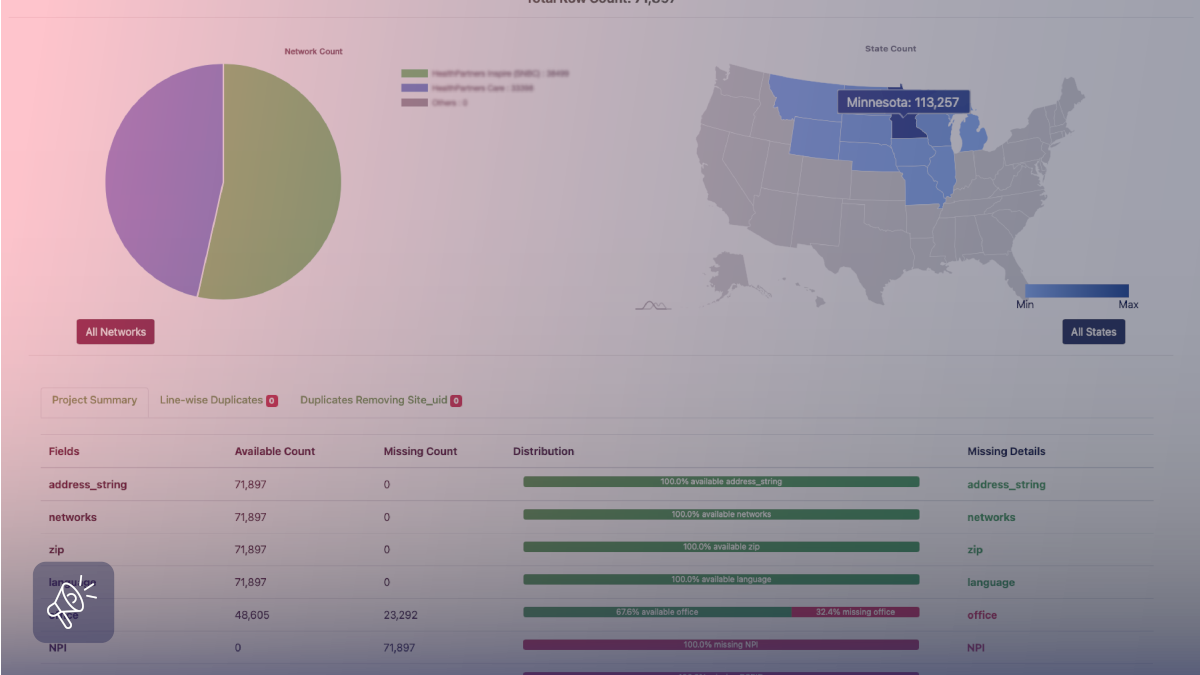

Ensuring data consistency and uniqueness

Catalog quality is mostly a deduplication and identity problem.

Create a uniqueness strategy

Decide what makes a SKU unique:

- SKU = (product parent) + (variant attributes)

- offer = SKU + seller + region + fulfillment type

Then enforce it, so duplicates cannot be created by accident.
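A minimal sketch of these uniqueness rules as identity keys, plus an in-memory registry that refuses a second SKU id for the same key, follows. The names are hypothetical. In a production system the same rule would more likely be a unique constraint in the catalog database.

```python
def sku_key(parent_id: str, variant_attributes: dict[str, str]) -> tuple:
    """SKU identity = parent + variant attributes, independent of key order and casing."""
    attrs = tuple(sorted((k.strip().lower(), v.strip().lower()) for k, v in variant_attributes.items()))
    return (parent_id, attrs)


def offer_key(sku_id: str, seller: str, region: str, fulfillment: str) -> tuple:
    """Offer identity = SKU + seller + region + fulfillment type."""
    return (sku_id, seller.strip().lower(), region.strip().upper(), fulfillment.strip().lower())


class SkuRegistry:
    """Enforces one SKU id per identity key (in-memory sketch)."""

    def __init__(self) -> None:
        self._ids_by_key: dict[tuple, str] = {}

    def register(self, sku_id: str, parent_id: str, variant_attributes: dict[str, str]) -> None:
        key = sku_key(parent_id, variant_attributes)
        existing = self._ids_by_key.get(key)
        if existing is not None and existing != sku_id:
            raise ValueError(f"{sku_id} would duplicate existing SKU {existing}")
        self._ids_by_key[key] = sku_id
```

Sorting the attribute pairs means that {"size": "9", "color": "black"} and {"color": "Black", "size": "9"} resolve to the same SKU.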

Use versioning and audit logs

When a field changes, record:

- old value, new value

- source and timestamp

- reason code (price scrape update, supplier feed update, manual correction)

This is essential when teams ask, “Why did the price change?” or “When did this image update?”
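A minimal sketch of an append-only audit log that records old value, new value, source, timestamp, and reason code follows. The reason codes mirror the examples above. The class names are hypothetical, and a real log would be persisted rather than kept in memory.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum
from typing import Any, Optional


class Reason(Enum):
    PRICE_SCRAPE_UPDATE = "price_scrape_update"
    SUPPLIER_FEED_UPDATE = "supplier_feed_update"
    MANUAL_CORRECTION = "manual_correction"


@dataclass(frozen=True)
class FieldChange:
    sku: str
    field_name: str
    old_value: Any
    new_value: Any
    source: str  # e.g. a source URL or feed name
    timestamp: datetime
    reason: Reason


class AuditLog:
    """Append-only change log (in-memory sketch)."""

    def __init__(self) -> None:
        self._entries: list[FieldChange] = []

    def record(self, sku: str, field_name: str, old_value: Any, new_value: Any,
               source: str, reason: Reason, timestamp: Optional[datetime] = None) -> FieldChange:
        entry = FieldChange(sku, field_name, old_value, new_value, source,
                            timestamp or datetime.now(timezone.utc), reason)
        self._entries.append(entry)
        return entry

    def history(self, sku: str, field_name: str) -> list[FieldChange]:
        """Answer "why and when did this field change?", oldest change first."""
        matching = (e for e in self._entries if e.sku == sku and e.field_name == field_name)
        return sorted(matching, key=lambda e: e.timestamp)
```

Each entry keeps the old value, so a bad change can be traced and reversed.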

Put “human review” where it pays off

Not every mismatch needs a person. But some do:

- bestsellers

- expensive items

- regulated categories

- products that drive returns or complaints

Create a review queue so humans focus on high-impact exceptions rather than routine updates.
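One way to sketch such a queue is to score each exception by the criteria above and send only positive scores to a priority queue. The weights, the price threshold, and the return-rate threshold are hypothetical placeholders.

```python
import heapq
import itertools
from dataclasses import dataclass


@dataclass
class ReviewItem:
    sku: str
    issue: str
    is_bestseller: bool = False
    price: float = 0.0
    regulated_category: bool = False
    return_rate: float = 0.0  # share of orders returned, 0..1


# Hypothetical thresholds; each weight in review_priority maps to one criterion above.
HIGH_PRICE = 500.0
HIGH_RETURN_RATE = 0.10


def review_priority(item: ReviewItem) -> int:
    score = 0
    if item.regulated_category:
        score += 4
    if item.is_bestseller:
        score += 3
    if item.price >= HIGH_PRICE:
        score += 2
    if item.return_rate >= HIGH_RETURN_RATE:
        score += 2
    return score


class ReviewQueue:
    """Only high-impact exceptions reach a human; routine ones are handled automatically."""

    def __init__(self) -> None:
        self._heap: list[tuple[int, int, ReviewItem]] = []
        self._counter = itertools.count()  # tie-breaker keeps insertion order stable

    def submit(self, item: ReviewItem) -> bool:
        score = review_priority(item)
        if score == 0:
            return False  # routine: apply under the normal guardrails
        heapq.heappush(self._heap, (-score, next(self._counter), item))
        return True

    def next_item(self) -> ReviewItem:
        return heapq.heappop(self._heap)[2]
```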

Using catalog scraping to support market research

Catalog pipelines can do more than maintain product pages. They can help you leverage web data for market research by identifying:

- emerging product features competitors are highlighting

- price bands and promo patterns by category

- new entrants and fast-growing brands

- assortment gaps (what customers can buy elsewhere but not from you)

The key is separation. Keep “market research data” as an analytics layer, not as direct catalog truth, unless it passes the same validation rules.
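To keep that separation concrete, here is a minimal sketch that computes quartile price bands per category from scraped observations. It returns them as an analytics result and never writes to the catalog. The function name and the four-observation minimum are assumptions. `statistics.quantiles` comes from the Python standard library.

```python
from collections import defaultdict
from statistics import quantiles


def price_bands(
    observations: list[tuple[str, float]], min_points: int = 4
) -> dict[str, tuple[float, float, float]]:
    """Quartile price bands (Q1, median, Q3) per category from scraped prices.

    The output belongs in the analytics layer; nothing here writes to the catalog.
    """
    prices_by_category: dict[str, list[float]] = defaultdict(list)
    for category, price in observations:
        prices_by_category[category].append(price)
    bands = {}
    for category, prices in prices_by_category.items():
        if len(prices) < min_points:
            continue  # too few observations for meaningful quartiles
        q1, median, q3 = quantiles(prices, n=4)
        bands[category] = (q1, median, q3)
    return bands
```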

How Grepsr supports product catalog management and scraping

Catalog teams do not fail because they lack ambition. They fail because sources change constantly, and the moment one marketplace or competitor tweaks a layout, the whole pipeline starts leaking bad data into your feeds, listings, and reporting.

Grepsr keeps catalog operations stable by running product catalog scraping and change monitoring as a managed workflow. You can scrape at scale across multiple sources while keeping a clean structure across the product, variant, and offer layers. Merging catalogs cleanly across channels is as much a normalization problem as a scraping problem, which is why Grepsr also focuses on SKU and attribute consistency in pipelines such as catalog aggregation + SKU normalization. At scale, the same approach has powered high-frequency product extraction for platforms like Tradeswell, where fresh product data across hundreds of categories had to arrive reliably multiple times a day. To map this to your exact sources, fields, refresh schedule, and delivery format, start with Grepsr’s Services overview or the Contact Sales intake.

Conclusion

Catalog management becomes easier when you stop treating it like manual maintenance and start treating it like an engineered system.

With the right scraping strategy, strong normalization, and automated guardrails, you can keep product pages accurate, pricing current, and inventory signals reliable. That improves customer trust, reduces returns, and gives your teams time to focus on growth instead of constant fixes.

If your catalog is growing across suppliers, marketplaces, or categories, the next step is to build a stable data pipeline that refreshes, validates, and publishes updates without drama. Grepsr can help you put that system in place.

FAQs

What is e-commerce product catalog scraping?

E-commerce product catalog scraping is the process of collecting product data from online sources to build or maintain a structured catalog, including attributes, images, pricing, and availability.

How is product data extraction different from price monitoring?

Product data extraction focuses on the full product record (attributes, descriptions, images, variants). Price monitoring focuses mainly on price and promotions, often at the offer level.

How do I handle variations and multiple SKUs correctly?

Model your catalog as product parents, variant SKUs, and seller offers. Variants should be tied to attribute combinations (size, color, pack) so you avoid duplicates and mismatches.

What should an automated catalog update pipeline include?

Scheduled collection, normalization, validation checks, delta updates, and audit logs, so every change is traceable and reversible if needed.

How can inventory scraping improve operations?

Inventory scraping helps you detect stockouts early, adjust merchandising, trigger reorder decisions, and reduce cases where customers see in-stock items that are not actually available.